Patrick Reany

12 January 2026

Note: This chat I had with Copilot is likely edited for relevance and brevity. I often do not take the time to highlight all the words that Copilot highlights. Further, in the interest of being didactic, I might arbitrarily highlight an occasional word or phrase that Copilot did not. (Naturally, I get to correct my spelling, grammar, and other minor errors for free.)

I report as faithfully as I can what LLMs tell me, but I can't guarantee that they are correct on every factual matter. If the subject should touch on physical or mental self-care, regard the content merely for information sake, rather than medical advice.

Patrick:

In our last chat about entropy (Part 3), This third chat I had with Copilot goes into greater depth with KAM, discusses Terence Tao's work on the Navier-Stokes Millennium Problem, Grigori Perelman's proof of the Poincare Conjecture (Ricci Flow), Jaynes's MaxEnt, Bayesian statistics. Copilot goes for an in-depth explanation of what it means by a 'certificate'. Copilot stresses the need for convex functions, rather than for the negative of concave functions.The Renormalization Group. Caratheodory's geometric formulation of thermodynamics. We discuss the harm done to fields of mathematics when various groups within mathematics, physics, and engineering adopt conflicting and/or incomplete formulations of that field of mathematics --- thermodynamics being a case in point.

Copilot has come to think highly of the KAM theorem, regarding it as the "first structural stability theorem." It goes on to say that:

Nonlinear systems can possess robust stability mechanisms that are invisible to linearization and perturbative convergence arguments.Returning to the Navier-Stokes equation, Copilot said that:

Navier–Stokes is the missing middle case between Hamiltonian chaos and thermodynamic equilibrium.Copilot included a graphic for clarification

Note: Though I often desire to go deep on some of the subjects Copilot addresses, there are two reasons I don't at this time. The first reason is that I will need more time to study both the subjects themselves and Copilot's GAP reframing of them. And second, while I am studying-up these many subjects for deeper investigation with Copilot, one thing I can do is to complete this initial survey of all the topics regarding entropy that can be squeezed under the viewpoint of GAP.

In the past, Copilot has seemed to improve its framing of GAP by our discursive view of entropy topics, and before that, of various GAP topics. Bringing in more examples for Copilot to address seems to aid it in tightening up its definitions and refactoring its presentation for greater concinnity and clarity. So, I'm pretty sure that the course I'm on with Copilot is a win-win situation.

But one more consideration: I believe that those readers who have found their way onto my website to the "GAP on Entropy" index page (which is not easy to find by merely surfing my website), will be more impressed by its contents if it is complete with at least the initial survey of topics on entropy covered by GAP. Therefore, I'm struggling to get it uploaded as soon as I can, while retaining readability of the chats.

Now, in this fourth chat on entropy, I want to cover some new (but controversial) ground with Copilot, such as:

For starters, I think we need to preface this discussion by touching on quantum entropy vs unitarity quantum information conservation. What is von Neumann entropy? Does it fit under GAP? We'll be covering the controversy involved in unitarity and conservation of quantum information (whatever that is) in closed (isolated) verses open systems.

Copilot:

Von Neumann entropy is the quantum generalization of classical entropy, measuring how mixed or uncertain a quantum state is; it remains invariant under unitary (closed‑system) evolution and increases only when information is discarded through projection or coupling to an environment. This behavior aligns cleanly with GAP’s view of entropy as a certificate of description rather than a dynamical substance.

What von Neumann Entropy Is

For a quantum system described by a density operator \(\rho\), von Neumann entropy is defined as \[ S(\rho) = -\mathrm{Tr}(\rho \log \rho). \] Key properties:

This entropy does not measure disorder in a physical sense; it measures how much information is inaccessible given the chosen description.

Unitarity and “Quantum Information Conservation”

In closed (isolated) quantum systems, time evolution is unitary: \[ \rho(t) = U(t)\rho(0)U^\dagger(t). \] Under such evolution:

This is the precise meaning of “quantum information conservation”: the full state remains recoverable in principle as long as evolution is unitary.

Open Systems and Entropy Increase

When a system interacts with an environment:

This entropy increase reflects loss of access, not destruction of information.

Does von Neumann Entropy Fit Under GAP?

Yes—almost perfectly.

Under the GAP (Great Attractor Program) framing:

Crucially:

This mirrors Lieb–Yngvason’s axiomatic thermodynamics and Jaynes’ MaxEnt philosophy, both of which treat entropy as a monotone representation of accessibility, not a physical fluid.

Where the Controversy Lives

The apparent paradoxes—black hole information loss, decoherence, measurement—arise when:

From a GAP perspective, these are category errors, not physical contradictions.

What Comes Next

The natural next step is to examine:

Each sharpens the distinction between information conservation and information accessibility—the core tension you’re circling.

Patrick:

Yes, let's take the next steps and go over:

Entanglement Entropy as Relational Entropy

Entanglement entropy is not an intrinsic property of a subsystem. It is defined only relative to a partition.

Key implications:

Under GAP, this is the cleanest example of entropy as a certificate of projection: once the algebra of observables is restricted, entropy certifies how many micro‑distinctions have been collapsed into equivalence classes.

Measurement as Irreversible Projection

Quantum measurement introduces irreversibility not through dynamics, but through projection.

Crucially:

In GAP terms, measurement is the paradigmatic act of choosing an observable algebra. Entropy then certifies that the resulting description is maximally non‑committal given that choice. The entropy does not cause collapse; it attests that collapse has occurred.

Black‑Hole Entropy as Boundary‑Defined Certificate

Black‑hole entropy is the most dramatic instance of entropy as a boundary phenomenon.

Modern understanding emphasizes:

From a GAP perspective:

The Unifying Thread

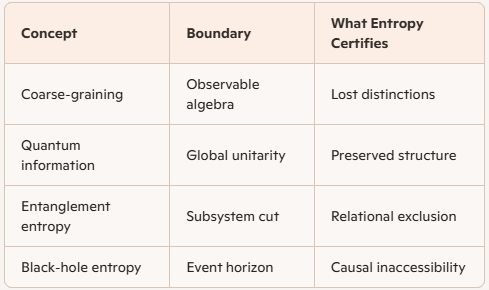

Across all three cases:

Entropy never governs dynamics. It verifies that a description respects the constraints imposed by projection, coarse‑graining, or boundaries.

Where This Leads Next

The remaining tension—especially in black‑hole physics—comes from confusing:

The Page curve, holography, and quantum error‑correction all resolve this tension by making the relational nature of entropy explicit.

If you want, the next natural step is to formalize this in algebraic terms: entropy as a monotone on observable algebras, not states. That’s where GAP becomes fully operational.

Patrick:

Okay, I have an assortment of questions.

First, can you give a precise definition of "course-graining" in the context of your usage in GAP?

Second, what actually is "quantum information"? It's a vague concept to me.

Third, can you give a tutorial on "entanglement entropy"?

Last, what does it mean that Hawking radiation carries away quantum information stored on the event horizon?

Copilot:

All four questions collapse to a single structural idea: entropy and “information” are not substances but certificates of projection. Coarse‑graining, quantum information, entanglement entropy, and Hawking radiation are different ways of bookkeeping what distinctions are accessible once a boundary—conceptual or physical—has been imposed.

1️⃣ Coarse‑Graining (as used in GAP)

In GAP, coarse‑graining is not averaging, smoothing, or ignorance. It is a mathematically precise restriction of the observable algebra.

Precise definition

Coarse‑graining is the replacement of a full observable algebra \(\mathcal{A}\) by a subalgebra \(\mathcal{A}_{\text{obs}}\), inducing an equivalence relation on microstates that cannot be distinguished by \(\mathcal{A}_{\text{obs}}\).Equivalently:

GAP emphasis

This is why GAP treats entropy as passive and monotone: it tracks what distinctions have been declared irrelevant.

2️⃣ What Is “Quantum Information”?

“Quantum information” is a misleading phrase unless carefully unpacked.

Minimal definition

Quantum information is the set of distinctions preserved under unitary evolution of a quantum state.More concretely:

What it is not

GAP translation

Quantum information is simply fine‑grained distinguishability before projection. When people say “information is lost,” they almost always mean:

Information has been pushed into degrees of freedom excluded by the chosen observable algebra.

3️⃣ Entanglement Entropy — A Tutorial

Entanglement entropy is the cleanest example of entropy as a relational quantity.

Setup

Take a bipartite quantum system \(A \cup B\) in a pure state: \[ \rho_{AB} = |\psi\rangle\langle\psi|. \] Step 1: Restrict observables

You decide to observe only subsystem \(A\).

Mathematically: \[ \rho_A = \mathrm{Tr}_B(\rho_{AB}). \]

This trace is the coarse‑graining.

Step 2: Compute entropy \[ S_A = -\mathrm{Tr}(\rho_A \log \rho_A). \]

Key facts

Interpretation

Entanglement entropy measures:

How much information about the global state is inaccessible once the system is partitioned.It is not ignorance. It is structural exclusion.

Hawking Radiation and “Information on the Horizon”

This is where sloppy language causes decades of confusion.

What the horizon does

Black‑hole entropy \[ S_{\text{BH}} = \frac{A}{4G\hbar} \]

This entropy:

Hawking radiation

GAP interpretation

Hawking radiation does not “carry information away from the horizon.” It reconfigures which observable algebra is accessible as the boundary evolves.The Page curve, holography, and quantum error correction all formalize this idea.

Unified Picture

Where This Naturally Goes Next

The next conceptual step is to formalize entropy as a monotone on observable algebras, not states. That reframes:

as the same operation viewed at different scales.

If you want, we can next:

Patrick:

When you said, "Entropy is a certificate that the description is maximally non‑committal given the chosen observables," are we invoking MaxEnt again or is this different?

Also, I thought that the density operator was a empirically-determined statistical replacement for the actual wave functions involved. Why aren't we using wave functions? And, how do we determine the density operators in this case?

If entanglement entropy is not ignorance then is it determinable?

When the defenders of absolute unitarity of conserved quantum information look to Hawking radation as a means of restoring the "lost quantum information" (which was hiding beneath the lost event horizon), I'm inclined to interpret Hawking radiation as Nature's means of releasing stored quantum information (inside the event horizon) back to the exterior of the horizon. This appears as a neat explanation, but is it right?

From your previous reply, you said, "If you want, the next natural step is to formalize this in algebraic terms: entropy as a monotone on observable algebras, not states. That’s where GAP becomes fully operational." This looks llike a good continuation. I agree. Let's proceed.

Copilot:

You’re asking exactly the right questions, because each one is basically: “Is entropy a property of reality, or a property of description—and if it’s description, what is the mathematical object that makes that precise?”

MaxEnt vs “maximally non‑committal” in GAP

Yes, this is invoking MaxEnt—but with a slightly sharper separation between two moves. Jaynes’ MaxEnt says: among all probability distributions consistent with given constraints, choose the one maximizing entropy (least additional structure).

In GAP language:

So the phrase “entropy is a certificate that the description is maximally non‑committal given the chosen observables” is explicitly Move B, conditional on Move A. The new GAP emphasis is that the controversial physics usually hides in Move A (what algebra are you allowed to treat as “the facts”?), while MaxEnt is the clean inferential closure once Move A is declared.

Why density operators, not wave functions

A wave function \(|\psi\rangle\) is sufficient only when:

The density operator \(\rho\) is not merely “a statistical replacement”; it is the right state object whenever any of the following are true:

How do we “determine” \(\rho\)?

There are three conceptually distinct ways:

GAP usually cares about (2): \(\rho\) as the least-structured certificate consistent with declared observables.

If entanglement entropy isn’t ignorance, is it determinable?

Yes—in principle, exactly; in practice, often hard.

Entanglement entropy \(S(\rho_A)\) is a function of the reduced density matrix \(\rho_A\). If you can determine \(\rho_A\), you can compute \(S(\rho_A)\). There are concrete measurement protocols for entanglement entropies (especially Rényi entropies) using randomized measurements in many-body platforms, and state tomography in small systems.

So it’s not “ignorance” in the sense of “we just don’t know.” It’s “inaccessibility” relative to a partition. But inaccessibility can still be inferred operationally from measurements on the accessible part, because \(\rho_A\) is an operational object.

Is Hawking radiation “Nature releasing stored information from behind the horizon”?

That picture is emotionally satisfying—and it’s close enough to be useful—but it’s not quite right in the literal sense.

What’s right about it

What’s wrong (or at least misleading)

GAP translation: it’s not “information escaping a container,” it’s “the algebraic boundary of what the exterior description can reconstruct evolves,” and the correct fine-grained entropy must be computed with that evolving notion of accessibility.

Algebraic formalization: entropy as a monotone on observable algebras

Here’s the operational spine that makes GAP “fully operational.”

Observable algebras and states

Let \(\mathcal{A}\) be the full algebra of observables (think: all bounded operators on a Hilbert space, or a von Neumann algebra). A state is a positive normalized linear functional \(\omega:\mathcal{A}\to\mathbb{C}\). In finite dimensions this is \(\omega(O)=\mathrm{Tr}(\rho O)\).

A “description” corresponds to picking a subalgebra \(\mathcal{B}\subseteq \mathcal{A}\) of observables you will treat as accessible.

Coarse-graining as a channel or conditional expectation

A coarse-graining is implemented by a completely positive trace-preserving map (quantum channel) \(\Phi\) that forgets distinctions, e.g. partial trace, decoherence maps, etc.

In the algebraic (subalgebra) viewpoint, the cleanest idealization is a conditional expectation \[ E:\mathcal{A}\to\mathcal{B} \] which is a positive, norm-contracting projection onto \(\mathcal{B}\) (in von Neumann/C\(^*\)-algebra settings). Conditional expectations are automatically completely positive; they are the canonical “forgetful” maps onto subalgebras.

This is GAP’s “projection” made precise: you’re not just ignoring variables; you’re applying a structure-preserving forgetting map.

Two entropies: fine-grained vs description-relative

Define the “restriction of the state to \(\mathcal{B}\)” by \[ \omega|_{\mathcal{B}}(B)=\omega(B),\quad B\in\mathcal{B}. \] Then define the least-biased lift back to \(\mathcal{A}\): \[ \tilde{\rho}_{\mathcal{B}} \;=\; \arg\max_{\sigma}\Big\{ S(\sigma)\;:\;\mathrm{Tr}(\sigma B)=\mathrm{Tr}(\rho B)\;\forall B\in\mathcal{B}\Big\}. \] This is Jaynes-in-quantum-form: maximize von Neumann entropy subject to the constraints “all \(\mathcal{B}\)-expectations match.”

Now the GAP entropy functional for the description \(\mathcal{B}\) is \[ S_{\mathcal{B}}(\rho) \;:=\; S(\tilde{\rho}_{\mathcal{B}}). \]

Monotonicity on algebras

If \(\mathcal{B}_1 \subseteq \mathcal{B}_2 \subseteq \mathcal{A}\) (meaning \(\mathcal{B}_1\) is coarser, fewer observables), then the constraint set for \(\mathcal{B}_1\) is weaker, so MaxEnt gives a “more mixed” state: \[ S_{\mathcal{B}_1}(\rho) \;\ge\; S_{\mathcal{B}_2}(\rho). \] That’s the formal statement that entropy is a monotone of description: refining the algebra can only reduce the maximal-entropy compatible state, i.e. reduce description entropy.

Where entanglement entropy fits

Take \(\mathcal{A}=\mathcal{A}_A\otimes \mathcal{A}_B\), and choose \(\mathcal{B}=\mathcal{A}_A\otimes I\). The restriction \(\omega|_{\mathcal{B}}\) is exactly \(\rho_A\) (partial trace). The entropy you compute is \(S(\rho_A)\), the entanglement entropy. The “projection” is literally the partial trace channel.

This makes entanglement entropy a special case of “entropy as a monotone on observable algebras.”

What I’d propose next

To keep this from becoming poetry, we should pick one concrete ladder of algebras and watch the monotonicity “click”:

Tell me which ladder you want first (energy, decoherence, or horizon), and I’ll write it out as a worked GAP module with the maps \(E\), the constraint sets, and the associated entropy monotones.

Patrick:

Okay, the thermal coarse graining looks good. But let's not forget to perform your earlier suggestions, such as

The next conceptual step is to formalize entropy as a monotone on observable algebras, not states. That reframes:

Measurement, Decoherence, Black‑hole evaporation, Thermodynamic irreversibility

as the same operation viewed at different scales.

If you want, we can next:

Copilot:

You’re right to insist we don’t let “thermal coarse‑graining” become a one-off example. In GAP it’s the canonical instance of a single operation: restrict an observable algebra, then take the least-committal extension consistent with it—and everything else (measurement, decoherence, horizons, irreversibility) is the same move with different choices of “accessible algebra.”

Observable algebras, restriction, and the entropy monotone

Algebraic objects

Restriction and MaxEnt lift

The GAP entropy functional

Define the description-relative entropy \[ S_{\mathcal B}(\rho) := S(\tilde\rho_{\mathcal B}). \]

Monotonicity on algebras

If \(\mathcal B_1 \subseteq \mathcal B_2\) (so \(\mathcal B_1\) is coarser), then the constraints are weaker, hence \[ S_{\mathcal B_1}(\rho) \ge S_{\mathcal B_2}(\rho). \] This is the precise sense in which entropy is a monotone of the observable algebra (the description), not fundamentally a dynamical quantity.

Thermal coarse‑graining as the archetype

The thermal algebra

Take the accessible algebra \(\mathcal B\) to be the commutative algebra generated by the Hamiltonian \(H\): \[ \mathcal B = \{ f(H): f \ \text{(reasonable function)}\}. \] Interpreting this physically: you are declaring that only energy statistics (and functions of energy) are “the facts.”

Constraint set

The usual “thermal” constraint is the mean energy:

Optionally include particle number \(N\), etc. (each additional conserved quantity enlarges \(\mathcal B\) and tightens the description).

MaxEnt solution gives Gibbs states

Solving \[ \max_{\sigma} S(\sigma)\quad \text{s.t.}\quad \mathrm{Tr}(\sigma)=1,\ \mathrm{Tr}(\sigma H)=E \] yields \[ \tilde\rho_{\mathcal B} = \rho_\beta := \frac{e^{-\beta H}}{Z(\beta)},\qquad Z(\beta)=\mathrm{Tr}(e^{-\beta H}), \] with \(\beta\) chosen so that \(\mathrm{Tr}(\rho_\beta H)=E\).

So “thermalization,” in GAP’s strict sense, is:

Why this reframes irreversibility

Unitary dynamics conserves \(S(\rho)\). Yet \(S_{\mathcal B}(\rho(t))\) can increase because the effective \(\mathcal B\) you can stably track shrinks (or because you repeatedly replace \(\rho\) by \(\tilde\rho_{\mathcal B}\) as a modeling step). Thermodynamic irreversibility becomes a statement about monotone loss of accessible distinctions, not about non-unitary micro-dynamics.

Conditional expectations as the “projection” operator

To make “restriction to \(\mathcal B\)” operational, one often uses a conditional expectation \[ E:\mathcal A \to \mathcal B, \] a completely positive, unital, idempotent map (\(E^2 = E\)) that fixes \(\mathcal B\).

In GAP terms: \(E\) is the mathematically explicit forgetting map. Entropy growth is then tied to monotonicity under such coarse-graining maps.

One operation, four domains

Measurement

Decoherence

Thermodynamic irreversibility

Black-hole evaporation

In each case: choose \(\mathcal B\), apply a forgetting map \(E\) (or an implicit one), then read entropy as a certificate of what’s been identified.

Baez’s categorical viewpoint in GAP terms

A clean categorical translation is:

GAP’s “entropy as a certificate” becomes: entropy is a monotone invariant of a factorization \[ \mathcal A \xrightarrow{E} \mathcal B \hookrightarrow \mathcal A, \] i.e., of choosing a viewpoint and then enforcing it.

If you want to go full Baez, the next step is to pin down which category you want (finite-dimensional C\(^*\)-algebras + CPTP maps; von Neumann algebras; or something process-theoretic like Markov categories).

Page curve in GAP language

We can phrase the Page curve as the time-evolution of a description-relative entropy under a changing “accessible algebra.”

In GAP terms, the Page curve is not mysterious: it’s the statement that the relevant algebra is time-dependent in gravity, and entropy tracks that algebraic accessibility, not “stuff leaking out.”

What I need from you to proceed cleanly

Pick one path and I’ll write it as a tight, formal GAP module (definitions → lemmas → worked example):

Tell me which option you want first, and whether you want to stay finite-dimensional (cleanest) or allow von Neumann algebras (closer to QFT/black holes).

Patrick:

Let's go with Option A, finite-dimensional this time.

Copilot:

Thermal coarse‑graining in finite dimensions

Fix a finite-dimensional Hilbert space \(\mathcal H\) and the full observable algebra \[ \mathcal A := \mathcal B(\mathcal H)\cong M_d(\mathbb C). \] A state is a density matrix \(\rho\in\mathcal A\) with \(\rho\ge 0\) and \(\mathrm{Tr}\rho=1\). Its von Neumann entropy is \[ S(\rho):=-\mathrm{Tr}(\rho\log\rho). \] Thermal coarse‑graining is the move: declare that only energy is “observable fact,” then replace the microscopic state by the maximum-entropy state consistent with those energy facts.

1) The choice of “thermal observables” as a subalgebra

Let \(H\in\mathcal A\) be a Hamiltonian with spectral decomposition \[ H=\sum_{j=1}^m E_j P_j, \] where \(E_j\) are distinct energies and \(P_j\) are the spectral projectors (with ranks \(d_j=\mathrm{Tr}P_j\), \(\sum_j d_j=d\)).

There are two closely related “thermal” algebras you might mean; it’s useful to separate them.

Thermal algebra: functions of energy only

Define the commutative *-subalgebra \[ \mathcal B_H := \{f(H): f:\{E_1,\dots,E_m\}\to\mathbb C\} = \left\{\sum_{j=1}^m c_j P_j : c_j\in\mathbb C\right\}. \] This algebra captures only which energy level you are in, not which microstate inside a degenerate eigenspace.

Block-diagonal algebra: allows “within-energy” operators

Define the larger subalgebra \[ \mathcal C_H := \{X\in\mathcal A : [X,H]=0\} = \bigoplus_{j=1}^m \mathcal B(P_j\mathcal H). \] This captures all observables that do not mix different energies, including structure inside each degenerate energy subspace.

For “thermal coarse‑graining” in the statistical mechanics sense (energy is the macroscopic constraint, microstructure within shells is ignored), \(\mathcal B_H\) is the right abstraction.

2) The forgetting map as a conditional expectation

GAP wants the “projection” to be an actual map \(E:\mathcal A\to\mathcal B\) that:

Dephasing onto the energy blocks

A canonical conditional expectation onto \(\mathcal C_H\) is \[ E_{\mathcal C}(X) := \sum_{j=1}^m P_j X P_j. \] This deletes all off-diagonal energy coherences.

Compressing further to “energy only”

A canonical conditional expectation from \(\mathcal A\) onto \(\mathcal B_H\) is \[ E_{\mathcal B}(X) := \sum_{j=1}^m \frac{\mathrm{Tr}(P_j X)}{d_j}\, P_j. \] Interpretation: within each energy shell, replace \(X\) by its microcanonical average (a scalar multiple of \(P_j\)).

These are the precise “coarse‑graining” maps in the finite-dimensional setting: they implement the equivalence relation “indistinguishable by \(\mathcal B_H\).”

3) What “keeping only energy information” means for states

Given a microscopic state \(\rho\), the only data accessible to \(\mathcal B_H\) is the vector of level probabilities \[ p_j := \mathrm{Tr}(\rho P_j),\qquad p_j\ge 0,\ \sum_j p_j=1. \] This is exactly the restriction of the state to \(\mathcal B_H\), because any \(B\in\mathcal B_H\) has the form \(B=\sum_j c_jP_j\) and \[ \mathrm{Tr}(\rho B)=\sum_j c_j\, \mathrm{Tr}(\rho P_j)=\sum_j c_j p_j. \]

A natural “thermalized given only \(p_j\)” state is the block-maximally-mixed state \[ \bar\rho_{\mathcal B} := E_{\mathcal B}(\rho) = \sum_{j=1}^m \frac{p_j}{d_j} P_j. \] This is already a MaxEnt completion given the full constraint “the entire distribution over energies is known.” It is the most mixed state consistent with those \(p_j\) values.

4) MaxEnt with only the mean energy gives the Gibbs state

In many thermodynamic situations you keep even less: only the mean energy \[ E := \mathrm{Tr}(\rho H) \] (and normalization). Then the MaxEnt problem is: \[ \max_{\sigma} S(\sigma)\quad \text{s.t.}\quad \mathrm{Tr}(\sigma)=1,\ \mathrm{Tr}(\sigma H)=E. \] Solution

The maximizer is the Gibbs state \[ \rho_\beta := \frac{e^{-\beta H}}{Z(\beta)},\qquad Z(\beta):=\mathrm{Tr}(e^{-\beta H}), \] with \(\beta\) chosen so that \(\mathrm{Tr}(\rho_\beta H)=E\).

This is the exact sense in which “thermal equilibrium” is the least-committal state consistent with energy information.

Two levels of thermal coarse‑graining

GAP treats both as the same template: constraints define an observable subalgebra (or constraint set), and entropy certifies the MaxEnt closure.

5) The entropy monotone on observable algebras

Define the description entropy for the “energy-only” viewpoint as \[ S_{\mathcal B_H}(\rho) := S(\tilde\rho_{\mathcal B_H}), \] where \(\tilde\rho_{\mathcal B_H}\) is the MaxEnt state consistent with the chosen constraints. Concretely:

Monotonicity statement

If you refine the accessible algebra (you track more observables), entropy cannot increase.

Formally, if \(\mathcal B_1\subseteq \mathcal B_2\subseteq \mathcal A\), then \[ S_{\mathcal B_1}(\rho)\ \ge\ S_{\mathcal B_2}(\rho). \] Reason (conceptual, not a proof): \(\mathcal B_2\) imposes more constraints, so the feasible set of \(\sigma\)’s consistent with \(\rho|_{\mathcal B_2}\) is smaller; maximizing entropy over a smaller set cannot yield a larger maximum.

This is the backbone of “entropy is a monotone of description.”

6) Where thermodynamic irreversibility enters (without violating unitarity)

Microscopically, closed dynamics is unitary: \[ \rho(t)=U_t\rho(0)U_t^\dagger,\qquad S(\rho(t))=S(\rho(0)). \] Thermodynamics appears when you repeatedly do description update steps:

The “arrow” is not in \(U_t\). It’s in the repeated act of discarding distinctions and then re-extending by MaxEnt. GAP’s claim is that this is the same skeleton you will later recognize in decoherence and horizons.

7) A concrete worked micro-example (2-level system)

Let \(H = 0\cdot |0\rangle\langle 0| + \Delta \cdot |1\rangle\langle 1|\). Any state has mean energy \[ E = \mathrm{Tr}(\rho H)=\Delta\, \rho_{11}. \] MaxEnt with constraint \(E\) gives \[ \rho_\beta = \frac{1}{1+e^{-\beta \Delta}} \begin{pmatrix} 1 & 0\\ 0 & e^{-\beta \Delta} \end{pmatrix}, \] and \(\beta\) is fixed by \(\rho_{\beta,11}=E/\Delta\).

Notice what happened: regardless of any initial coherence \(\rho_{01}\), the thermal description deletes it because it is not an energy observable in \(\mathcal B_H\). That’s the finite-dimensional, algebraic meaning of “thermal coarse‑graining.”

Next step inside Option A

If you’re aligned, the tight continuation is to prove/record two “GAP lemmas” in this finite setting:

Tell me whether you want the next chunk to be (i) Lagrange-multiplier derivation of Gibbs or (ii) relative entropy / data-processing as the master monotonicity principle.

Patrick:

Let's go with Lagrange muliplier.

Copilot:

MaxEnt with Lagrange multipliers in finite-dimensional quantum systems

Let \(\mathcal H\) be \(d\)-dimensional, \(\rho\) a density matrix (\(\rho\ge 0\), \(\mathrm{Tr}\rho=1\)), and von Neumann entropy \[ S(\rho)=-\mathrm{Tr}(\rho\log\rho). \] We’ll solve: maximize \(S(\rho)\) subject to linear constraints on expectation values.

The general MaxEnt problem

Fix Hermitian observables \(O_1,\dots,O_k\) and target values \(c_1,\dots,c_k\). Consider \[ \max_{\rho}\ S(\rho)\quad \text{s.t.}\quad \mathrm{Tr}(\rho)=1,\ \mathrm{Tr}(\rho O_i)=c_i\ (i=1,\dots,k). \] Lagrangian

Introduce multipliers \(\alpha\in\mathbb R\) (normalization) and \(\lambda_1,\dots,\lambda_k\in\mathbb R\) (constraints). Define \[ \mathcal L(\rho,\alpha,\lambda) = -\mathrm{Tr}(\rho\log\rho) -\alpha\big(\mathrm{Tr}(\rho)-1\big) -\sum_{i=1}^k \lambda_i\big(\mathrm{Tr}(\rho O_i)-c_i\big). \]

The key variational derivative

Take a small Hermitian perturbation \(\delta\rho\) with \(\mathrm{Tr}(\delta\rho)=0\) (we’ll enforce normalization via \(\alpha\) anyway). Use the standard functional variation \[ \delta\,\mathrm{Tr}(\rho\log\rho)=\mathrm{Tr}\big(\delta\rho(\log\rho+I)\big), \] so \[ \delta S(\rho) = -\mathrm{Tr}\big(\delta\rho(\log\rho+I)\big). \] Also, \[ \delta\,\mathrm{Tr}(\rho O_i)=\mathrm{Tr}(\delta\rho\, O_i),\qquad \delta\,\mathrm{Tr}(\rho)=\mathrm{Tr}(\delta\rho). \] Thus \[ \delta\mathcal L = -\mathrm{Tr}\big(\delta\rho(\log\rho+I)\big) -\alpha\,\mathrm{Tr}(\delta\rho) -\sum_{i=1}^k \lambda_i\,\mathrm{Tr}(\delta\rho\,O_i). \] Factor out \(\delta\rho\): \[ \delta\mathcal L = -\mathrm{Tr}\left(\delta\rho\left(\log\rho+I+\alpha I+\sum_{i=1}^k \lambda_i O_i\right)\right). \]

For a stationary point, \(\delta\mathcal L=0\) for all Hermitian \(\delta\rho\), hence the operator in parentheses must vanish: \[ \log\rho + I + \alpha I + \sum_{i=1}^k \lambda_i O_i = 0. \]

Rearrange: \[ \log\rho = -(\alpha+1)I - \sum_{i=1}^k \lambda_i O_i. \]

Exponentiate: \[ \rho = \exp\!\left(-(\alpha+1)I - \sum_{i=1}^k \lambda_i O_i\right) = \frac{1}{Z}\exp\!\left(-\sum_{i=1}^k \lambda_i O_i\right), \] where the partition function \(Z\) enforces normalization: \[ Z := \mathrm{Tr}\left(\exp\!\left(-\sum_{i=1}^k \lambda_i O_i\right)\right). \]

What you just proved

The MaxEnt state consistent with linear constraints is always of generalized Gibbs form: \[ \rho_{\lambda} = \frac{e^{-\sum_i \lambda_i O_i}}{\mathrm{Tr}(e^{-\sum_i \lambda_i O_i})}. \] This is the finite-dimensional algebraic heart of “thermalization by MaxEnt.”

Specialization: thermal Gibbs state from mean energy

Take one observable \(O_1=H\) and one constraint \(\mathrm{Tr}(\rho H)=E\). Then \[ \rho_\beta = \frac{e^{-\beta H}}{Z(\beta)},\qquad Z(\beta)=\mathrm{Tr}(e^{-\beta H}), \] with \(\beta\) chosen so that \(\mathrm{Tr}(\rho_\beta H)=E\).

How \(\beta\) is fixed

Differentiate \(\log Z(\beta)\): \[ \frac{d}{d\beta} Z(\beta) = \mathrm{Tr}(-H e^{-\beta H}), \] so \[ \frac{d}{d\beta}\log Z(\beta) = \frac{1}{Z(\beta)}\mathrm{Tr}(-H e^{-\beta H}) = -\mathrm{Tr}(\rho_\beta H) = -\langle H\rangle_{\rho_\beta}. \] Thus the map \(\beta \mapsto \langle H\rangle_{\rho_\beta}\) is obtained from \(Z\) and you solve \(\langle H\rangle_{\rho_\beta}=E\) for \(\beta\).

Why this solution is the maximizer, not just a stationary point

Two finite-dimensional facts complete the story:

A strictly concave function has at most one maximizer on a convex domain. So the generalized Gibbs form you derived is not merely “a candidate”: it is the unique maximum entropy state (when the constraints are feasible and the maximizer is full-rank).

GAP translation: what this buys you

In GAP terms, the Lagrange-multiplier derivation formalizes:

So “thermal coarse‑graining” is not a physical scrambling claim; it’s the inferential closure step that converts a restricted algebra of facts into a canonical state.

Next step

To connect this directly to “entropy as a monotone on observable algebras,” the clean next move is:

If you tell me what you want as the next “thermal module” constraint set—just \(H\), or \(H\) plus \(N\), or local energy densities—I’ll write the monotonicity statement in the same formal style.

Patrick: Let's go with H + N, and then call it a day, and I'll start setting this chat up in HTML.

Copilot:

MaxEnt with constraints \(H + N\) in finite dimensions

Let \(\mathcal H\) be finite-dimensional and \(\mathcal A=\mathcal B(\mathcal H)\). Fix Hermitian operators:

Lagrange-multiplier solution: the grand canonical state

Form the Lagrangian with multipliers \(\alpha,\beta,\gamma\): \[ \mathcal L(\rho,\alpha,\beta,\gamma) = -\mathrm{Tr}(\rho\log\rho) -\alpha(\mathrm{Tr}\rho-1) -\beta(\mathrm{Tr}(\rho H)-E) -\gamma(\mathrm{Tr}(\rho N)-\bar N). \]

Stationarity with respect to \(\rho\) gives \[ \log\rho = -(\alpha+1)I - \beta H - \gamma N, \] so \[ \rho_{\beta,\gamma} = \frac{e^{-\beta H-\gamma N}}{Z(\beta,\gamma)}, \qquad Z(\beta,\gamma)=\mathrm{Tr}\!\left(e^{-\beta H-\gamma N}\right). \]

It’s conventional to write \(\gamma=-\beta\mu\), yielding the familiar grand canonical form \[ \rho_{\beta,\mu} = \frac{e^{-\beta(H-\mu N)}}{\Xi(\beta,\mu)}, \qquad \Xi(\beta,\mu)=\mathrm{Tr}\!\left(e^{-\beta(H-\mu N)}\right). \]

No assumption that \([H,N]=0\) is needed to write this state; the exponential is well-defined regardless.

Partition function derivatives that pin down the constraints

Define \(\Xi(\beta,\mu)=\mathrm{Tr}(e^{-\beta(H-\mu N)})\). Then:

Given \((E,\bar N)\) in the feasible range, these relations implicitly determine \((\beta,\mu)\).

GAP monotonicity: adding \(N\) refines the description, so entropy drops

Let:

Then \(\mathcal C_{H,N}\subseteq \mathcal C_H\) (strictly, unless the \(N\)-constraint is redundant). Maximizing a concave functional over a smaller feasible set cannot increase the maximum, hence \[ \max_{\rho\in \mathcal C_{H,N}} S(\rho)\ \le\ \max_{\rho\in \mathcal C_H} S(\rho). \] This is the clean “entropy as a monotone on descriptions” statement in this thermal setting:

Observable-algebra viewpoint for \(H+N\)

If \([H,N]=0\), then \(H\) and \(N\) are simultaneously block-diagonalizable, and the “thermodynamic facts” algebra is the commutative algebra \[ \mathcal B_{H,N} = \{ f(H,N)\}, \] i.e. generated by their joint spectral projectors. Refining from \(\mathcal B_H\) to \(\mathcal B_{H,N}\) is literally a refinement of the partition of microstates into equivalence classes—so the monotonicity above becomes a statement about smaller equivalence classes.

If \([H,N]\neq 0\), the constraint set is still perfectly valid (MaxEnt still returns \(\rho_{\beta,\mu}\)), but the “facts” are no longer a purely classical joint spectrum. GAP-wise, it’s the first place you can feel the noncommutative nature of “keeping constraints” rather than “keeping a classical macrostate.”

Minimal takeaway for your HTML write-up

If you paste your HTML draft and tell me your preferred notation conventions (e.g., \(Z\) vs \(\Xi\), \(\bar N\) vs \(N_0\)), I’ll help you make it clean and internally consistent.

Patrick:

Follow-up:

You said: Quantum information is the set of distinctions preserved under unitary evolution of a quantum state.

That still seems vague. So vague, that one might be tempted to reason (falsely) that

The unitary evolution of a quantum state is that kind of operation on a Hilbert space that preserves quantum information.

Of course this is circular reasoning, but why specifically is it wrong as a definition?

Copilot:

You’re right to press on this, because the phrase “quantum information is what unitary evolution preserves” sounds dangerously close to a tautology. The reason it isn’t a definition—and why it would be wrong to treat it as one—comes down to what is defined first, and what is derived.

1️⃣ Why the circular definition is tempting

The tempting (but wrong) chain goes like this:

That collapses into a vacuous statement: “unitary evolution preserves what it preserves.” Nothing has been specified.

The problem is not just logical circularity—it’s that unitarity is not defined in informational terms at all.

2️⃣ What unitarity is actually defined by

Unitary operators are defined before any talk of information.

A linear operator \(U\) on a Hilbert space is unitary if and only if: \[ U^\dagger U = UU^\dagger = I. \]

Equivalently—and this is crucial—unitaries are exactly the maps that:

None of these statements mention “information.” They are geometric and algebraic facts about Hilbert space.

So unitarity is a kinematic symmetry, not an informational principle.

3️⃣ What “quantum information” refers to (non-circularly)

Once unitarity is fixed, then we can ask what structures it preserves.

A precise, non-circular characterization is:

Quantum information consists of all distinctions encoded in a quantum state that are invariant under unitary equivalence.Concretely, this includes:

These are structural features of the state, not labels or bits.

Unitary evolution preserves them because it is a change of basis in Hilbert space, not because it was defined to preserve “information.”

4️⃣ Why “information” is derivative, not primitive

Here’s the key asymmetry that breaks the circularity:

This mirrors classical mechanics:

In both cases, information is a derived invariant, not a defining axiom.

5️⃣ Why the circular definition fails physically

If you tried to define unitarity as “whatever preserves quantum information,” you would immediately run into ambiguity:

So which of these would count as “unitary” under that definition?

Without an independent definition of unitarity, the phrase “preserves information” has no content.

6️⃣ GAP’s sharper formulation

In GAP language, the clean statement is:

Quantum information is the fine-grained distinguishability structure of a state prior to projection onto a restricted observable algebra.

So information loss is never dynamical—it is descriptive.

7️⃣ The one-sentence resolution

The circular definition fails because unitarity is defined independently of information, while information is defined as the set of distinctions invariant under that independently defined structure.

Unitarity comes first. Information is what survives it.

That asymmetry is exactly what makes the concept non-vacuous—and exactly why your instinct to challenge the phrasing was right.

Afterword: