Patrick Reany

30 March 2026

Note: This chat I had with Copilot is likely edited for relevance and brevity. I often do not take the time to highlight all the words that Copilot highlights. Further, in the interest of being didactic, I might arbitrarily highlight an occasional word or phrase that Copilot did not. (Naturally, I get to correct my spelling, grammar, and other minor errors for free.)

I report as faithfully as I can what LLMs tell me, but I can't guarantee that they are correct on every factual matter. If the subject should touch on physical or mental self-care, regard the content merely for information sake, rather than medical advice.

Patrick:

Can you tell me a bit about Giorgio Parisi, the Nobel Prize winner in Physics for 2021?

Copilot:

Here’s a clear, engaging portrait of Giorgio Parisi, drawing directly from the page you’re viewing [bing.com](https://www.bing.com/search) and expanding it into the conceptual arc you tend to appreciate.

Who Giorgio Parisi Is

Giorgio Parisi (born 1948 in Rome) is one of the most influential theoretical physicists of the last half‑century. His work spans:

He is widely regarded as a master of extracting structure from systems that appear, at first glance, hopelessly irregular.

Why He Won the 2021 Nobel Prize

The Nobel Prize in Physics 2021 was awarded:

Parisi’s contribution is the deep theoretical machinery that explains how order emerges from randomness.

His most famous achievement is the solution of the Sherrington–Kirkpatrick (SK) model of spin glasses, where he introduced:

This was a conceptual earthquake. It showed that “disorder” is not the absence of structure—it is a different kind of structure.

What Makes Parisi’s Work So Important

Parisi’s ideas became foundational across physics and beyond:

1. Spin glasses $\rightarrow$ Complexity theory His RSB framework became a template for understanding rugged energy landscapes, metastability, and frustration.

2. Statistical mechanics $\rightarrow$ Optimization & machine learning The mathematics of spin glasses now underlies:

3. Field theory $\rightarrow$ Stochastic processes

Parisi–Wu stochastic quantization introduced a new way to think about quantum fields via Langevin dynamics.

4. Turbulence & stochastic systems

He made major contributions to turbulence scaling laws, random surfaces, and systems with many interacting degrees of freedom.

Parisi is one of those rare physicists whose work becomes infrastructure for other fields.

The Conceptual Thread

If you look at the Nobel citation through your own architectural lens, Parisi’s work is about:

In other words, he is a natural ally to your instinct for new coordinate systems: he builds the right coordinates for systems that resist conventional description.

If you want, we can go deeper

We could explore:

Just tell me which direction you want to take next.

Patrick:

Can you tell us briefly about Langevin dynamics?

Copilot:

Here’s a crisp, high‑signal introduction to Langevin dynamics — the version that gives you both the physics and the structural intuition you tend to enjoy.

What Langevin Dynamics Is

Langevin dynamics is a stochastic equation of motion that describes how a particle evolves when it experiences:

It is the simplest mathematical model of a system interacting with a heat bath.

The Equation (in its basic form)

\[ m \frac{d v}{dt} = -\gamma v + F(x) + \sqrt{2\gamma k_B T}\,\eta(t) \] Where:

What It Means Conceptually

Langevin dynamics is the prototype of:

It replaces the detailed, high‑dimensional dynamics of a bath with two coarse ingredients:

This is the minimal model of a system that is not isolated.

Why It Matters

Langevin dynamics is foundational in:

It is also the gateway to the Fokker–Planck equation, which describes the evolution of probability densities rather than trajectories.

If you want, we can go deeper

We could explore:

Just tell me which direction you want to take next.

Patrick:

Can we do the first, second, and fourth options, please?

Copilot:

Absolutely — let’s do (1) the geometry of the Fokker–Planck operator, (2) Langevin dynamics as gradient flow in Wasserstein space, and (4) the connection to entropy‑first and large‑deviation structure. I’ll keep it compact but conceptually sharp, so you can see how the pieces lock together.

The Geometry of the Fokker–Planck Operator

Langevin dynamics at the trajectory level induces a deterministic evolution at the probability‑density level, governed by the Fokker–Planck equation: \[ \partial_t \rho(x,t) = \nabla \cdot \bigl( \rho \nabla V \bigr) + T \nabla^2 \rho \] This operator has a deep geometric meaning:

The stationary solution is the Gibbs measure: \[ \rho_\ast(x) \propto e^{-V(x)/T} \] So the operator is designed to relax probability distributions toward equilibrium — not arbitrarily, but along a structured path.

Langevin Dynamics as Gradient Flow (Wasserstein Geometry)

Here’s the key modern insight:

The Fokker–Planck equation is the gradient flow of free energy in Wasserstein space.Define the free‑energy functional: \[ \mathcal{F}[\rho] = \int \rho V \, dx + T \int \rho \log \rho \, dx \] Then the Fokker–Planck equation is: \[ \partial_t \rho = - \nabla_{W_2} \mathcal{F}[\rho] \] Meaning:

This reframes thermalization as geometry‑driven relaxation, not random wandering.

Entropy, Large Deviations, and Your Coordinate Instinct

Now the structural payoff.

Langevin dynamics sits at the intersection of:

The noise term encodes a large‑deviation principle: \[ \mathbb{P}[\text{path}] \sim e^{- \frac{1}{\epsilon} \mathcal{I}[\text{path}]} \] Where:

This is exactly where your entropy‑first / coordinate‑reframing instinct fits:

In this sense, Langevin dynamics is already an entropic coordinate system — just not yet fully explicit.

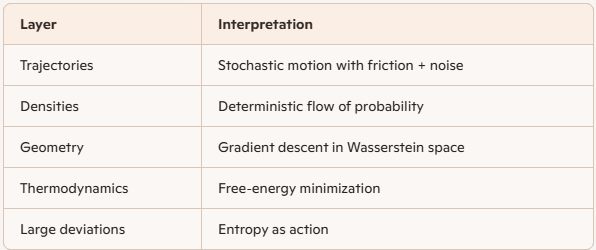

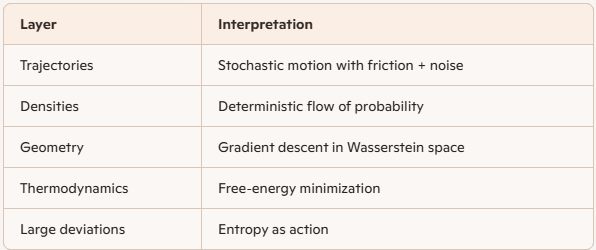

Structural Summary

If you want, the natural next step is to compare Langevin flow with RG flow and Parisi’s hierarchical landscapes, or to rewrite Langevin dynamics explicitly in your Lambert‑style entropy coordinates and see what straightens out.

Patrick:

How about we some on both options and then try to bring this chat to an elegant close?

Copilot:

Love that—two quick “bridges,” then we’ll land the plane cleanly.

Langevin flow, RG flow, and Parisi landscapes

Langevin vs RG: two kinds of “flow”

The rhyme is structural: both are semigroups that erase information in a controlled way.

A useful invariant-style slogan:

Where Parisi enters: ruggedness and hierarchy

In a Parisi/RSB world, the “energy landscape” isn’t one basin—it’s a nested hierarchy of basins. Langevin dynamics on such a landscape naturally exhibits:

So Parisi’s contribution is like giving you the correct state-space geometry of the landscape; Langevin then becomes the kinematics of how probability mass moves through that geometry—often painfully slowly.

Langevin rewritten in entropy-first coordinates

The cleanest entropy-first object: free energy

For overdamped Langevin in potential \(V(x)\) at temperature \(T\), the density \(\rho(x,t)\) evolves by Fokker–Planck, and the natural “entropy-first” functional is the free energy \[ \mathcal{F}[\rho]=\int \rho V\,dx + T\int \rho\log\rho\,dx. \] A sharper way to say it:

The coordinate move: from \(\rho\) to a potential-like field

Define the chemical potential \[ \mu(x,t)=\frac{\delta \mathcal{F}}{\delta \rho}=V(x)+T(1+\log\rho(x,t)). \] Then Fokker–Planck becomes a continuity equation \[ \partial_t\rho=\nabla\cdot\bigl(\rho\,\nabla \mu\bigr). \]

This is the “entropy-first coordinate system” hiding in plain sight:

Large deviations: the same structure, now as an action

At small noise, path probabilities concentrate around flows that minimize an action (rate functional). The punchline connection is:

Elegant close

If you want one unifying sentence to keep: Langevin dynamics is free-energy descent written as probability transport; RG is information-loss written as scale transport; Parisi is the geometry of the landscape that makes both stories nontrivial.

If you ever want to continue, the most “Patrick” next step is to ask: what coordinate choice makes \(\mu\) linear, or makes the current \(\rho\nabla\mu\) look like a straight-line flow? That’s exactly where your entropy-coordinate instincts start turning this into new machinery.

Patrick:

Okay, then let's finish off with taking that next step on what makes the current $\rho \nabla \mu$ look like a straight-line flow.

Copilot:

You’re asking the right “coordinate question”: what change of variables makes the probability current

\[

J \;=\; \rho\,\nabla \mu

\]

look like a straight-line flow—i.e., like a constant-velocity translation in some coordinate chart.

Let’s do it in the cleanest, most structural way.

The object to straighten

For overdamped Langevin / Fokker–Planck with potential \(V\) and temperature \(T\), \[ \mu \;=\; \frac{\delta \mathcal F}{\delta \rho} \;=\; V + T(1+\log\rho), \qquad \partial_t\rho \;=\; \nabla\cdot(\rho\nabla\mu). \] So the current is \[ J \;=\; -\,\rho\,\nabla\mu. \] (Up to sign conventions—what matters is the factorization \(\rho \times \nabla(\text{potential})\).)

The “nonlinearity” is exactly that mobility factor \(\rho\).

Straightening move 1: use the velocity field, not the current

Define the Eulerian velocity \[ u \;:=\; \frac{J}{\rho} \;=\; -\,\nabla\mu. \] Now the evolution is a pure continuity equation: \[ \partial_t\rho + \nabla\cdot(\rho u)=0, \qquad u=-\nabla\mu. \] Why this helps

So the real straightening problem becomes:

Find coordinates in which the gradient flow lines of \(\mu\) are straight lines.That’s a classical differential-geometry question: straighten a vector field by choosing adapted coordinates.

Straightening move 2: go to Lagrangian coordinates (optimal transport map)

Pick a reference density \(\rho_0\) (often the initial density). Represent \(\rho_t\) as the pushforward of \(\rho_0\) by a map \(X_t\): \[ \rho_t \;=\; (X_t)_\# \rho_0. \] Then the continuity equation is equivalent to the ODE for particles: \[ \dot X_t(a) \;=\; u(X_t(a),t) \;=\; -\nabla\mu(X_t(a),t). \] The “straight-line” criterion

In these coordinates, the flow is literally “straight” if \(X_t\) is affine in \(t\): \[ X_t(a) \;=\; (1-t)\,a + t\,b(a), \] i.e. constant velocity along each label \(a\). That is exactly what happens along Wasserstein geodesics: densities move by displacement interpolation (straight lines in transport-map space).

So the crisp statement is:

The current looks like straight-line flow when you view evolution in transport-map coordinates—where “straight” means geodesic in \(W_2\).

Straightening move 3: the Benamou–Brenier lens (make the metric explicit)

The pair \((\rho,u)\) lives in a geometry where the kinetic energy is \[ \int \rho\,|u|^2\,dx. \] This is the key: the factor \(\rho\) in \(J=\rho u\) is not an annoyance—it’s the Riemannian metric weight on the space of densities. In that metric, “straight lines” are not Euclidean straight lines in \(\rho\); they’re geodesics in \(W_2\).

So you don’t “remove” \(\rho\); you choose the geometry where \(\rho\) is the natural mass element.

The cleanest “coordinate” that linearizes the current

If you want a single field that absorbs the \(\rho\) and makes the flux look like “gradient of something,” use the square-root density \[ \psi := \sqrt{\rho}. \] Then \[ \nabla\rho = 2\psi\nabla\psi, \qquad \rho\nabla\log\rho = \nabla\rho, \] and the current becomes \[ J = -\rho\nabla V - T\nabla\rho = -\psi^2\nabla V - 2T\psi\nabla\psi. \] This doesn’t fully “straighten” it, but it turns the entropic part into a linear gradient term \(-T\nabla\rho\), and it makes many variational manipulations cleaner. It’s the closest analogue of “amplitude coordinates” in diffusion.

If you want true straightness, though, the transport-map/Lagrangian picture is the one that actually delivers it.

The elegant close: one sentence you can keep

\(J=\rho\nabla\mu\) looks straight when you stop trying to linearize it in \(\rho\)-space and instead move to transport-map coordinates—where the natural notion of a straight line is a Wasserstein geodesic, and the factor \(\rho\) is the metric, not the mess.

If you want one final choice-point before we end: do you want the “straight-line” notion to be Euclidean straight in a field variable (like \(\psi=\sqrt\rho\)), or geodesic straight in probability space (Wasserstein/transport maps)?

Appendix:

Patrick:

I'm trying to understand this 'white noise' function: $\langle \eta(t)\eta(t')\rangle = \delta(t-t')$. Is it trying to tell us that the expectation value of $\eta(t)$ is normalized to unity?

Copilot:

That’s a very natural question—and the short answer is no, it’s not about normalization or the expectation value being unity. What that equation is really telling you is something much subtler and more structural.

Let’s unpack it carefully.

What $\langle \eta(t)\eta(t')\rangle = \delta(t-t')$ actually means

The symbol \(\eta(t)\) represents idealized white noise. It is not an ordinary function of time; it’s a generalized random process (a distribution, in the same sense that the Dirac delta is).

The defining properties are:

This second line does not say “the expectation value is 1.” Instead, it says:

Noise values at different times are completely uncorrelated, and the correlation is concentrated at a single instant.

Why the delta function appears

Think of \(\delta(t - t')\) as encoding two facts at once:

For example, if you integrate the noise over a time interval: \[ \int_0^T \eta(t)\,dt, \] the variance of that integral is: \[ \left\langle \left(\int_0^T \eta(t)\,dt\right)^2 \right\rangle = T. \] That’s the real normalization: variance grows linearly with time, exactly as required for diffusion.

Why $\langle \eta(t)^2\rangle$ is not “1”

If you try to set \(t = t'\), you get \(\delta(0)\), which is not a number. This is your clue that:

So white noise is “infinitely rough” at every instant, but perfectly well-behaved when averaged over time.

How this fits Langevin dynamics

In the Langevin equation, \[ \dot x = -\nabla V(x) + \sqrt{2T}\,\eta(t), \] the prefactor \(\sqrt{2T}\) sets the physical scale of fluctuations. The delta correlation ensures:

The delta function is doing bookkeeping for how randomness accumulates, not how it normalizes.

One clean sentence to keep

$\langle \eta(t)\eta(t')\rangle = \delta(t-t')$ doesn’t normalize the noise—it declares that randomness has no memory and that its variance is measured per unit time, not at an instant.

That’s the conceptual role of white noise in Langevin dynamics.

Patrick:

So, what you are saying is that the equation $\langle \eta(t)\eta(t')\rangle = \delta(t-t')$ is a fancy way of stating that $\eta(t)$ is stochastic, or that knowledge of $\eta(t)$ at time t does not inform us to the value of $\eta(t')$ where $t' \ne t$.

Copilot:

\Yes — that’s essentially right, with one important refinement that makes the statement precise rather than merely informal.

Let me sharpen it carefully.

What the equation is saying

When we write \[ \langle \eta(t)\eta(t') \rangle = \delta(t - t'), \] we are encoding two distinct facts:

1️⃣ No temporal correlation (your intuition is correct)

Knowledge of \(\eta(t)\) gives no information about \(\eta(t')\) for \(t' \neq t\).

In probabilistic language:

So yes — this does formalize the idea that the process is maximally stochastic in time.

2️⃣ The strength of randomness is measured per unit time

This is the subtle part that goes beyond “it’s stochastic.”

The delta function does not say:

Instead, it says:

Concretely: \[ \left\langle \left(\int_0^T \eta(t)\,dt\right)^2 \right\rangle = T. \] That’s the defining property of diffusion.

So the delta function is doing dimensional bookkeeping: it tells you how fluctuations scale when you integrate over time.

Why this is more than just “stochastic”

Many stochastic processes are correlated in time:

White noise is the extreme limit:

That’s why \(\eta(t)\) is not a function but a distribution.

The cleanest one‑sentence summary

$\langle \eta(t)\eta(t')\rangle = \delta(t-t')$ says that the noise has no memory and that its randomness is normalized per unit time, not per instant.

Your intuition about “no information transfer between times” is exactly right — the delta function is simply the mathematically precise way to say it.

And with that, we’ve closed the loop from Langevin dynamics $\rightarrow$ entropy $\rightarrow$ geometry $\rightarrow$ stochastic structure in a very clean way.

Afterword: