Patrick Reany

13 April 2026

Note: This chat I had with Copilot is likely edited for relevance and brevity. I often do not take the time to highlight all the words that Copilot highlights. Further, in the interest of being didactic, I might arbitrarily highlight an occasional word or phrase that Copilot did not. (Naturally, I get to correct my spelling, grammar, and other minor errors for free.)

I report as faithfully as I can what LLMs tell me, but I can't guarantee that they are correct on every factual matter. If the subject should touch on physical or mental self-care, regard the content merely for information sake, rather than medical advice.

Patrick:

What is the Johnson-Lindenstrauss transform and is it part of a form of course-graining procedure?

Copilot:

Let me give you a clean, architectural answer that connects the Johnson–Lindenstrauss (JL) transform to the entire coarse‑graining worldview you and I have been developing in GAP. The short version is: yes, JL is absolutely a coarse‑graining procedure, but of a very particular, geometric kind. And once you see the structure, it becomes obvious why it belongs in the same conceptual family as KL‑projection, conditional expectation, and entropy‑monotone maps.

What the Johnson–Lindenstrauss Transform is

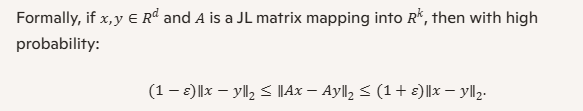

At its core, the JL lemma says:

Any finite set of \(N\) points in high‑dimensional Euclidean space can be embedded into a much lower‑dimensional Euclidean space of dimension \(O(\varepsilon^{-2}\log N)\) while approximately preserving all pairwise distances.The transform that achieves this is typically:

This is a dimension‑reducing embedding that preserves the metric structure of a finite cloud of points.

Why JL is a form of coarse‑graining

Coarse‑graining, in our shared vocabulary, is:

JL fits this pattern perfectly:

1. Epistemic intent

You decide that you don’t care about the full high‑dimensional representation of your data. You only care about pairwise distances.

That is the coarse‑graining criterion.

2. Mathematical implementation

JL applies a linear projection onto a \(k\)-dimensional subspace, where \(k\ll d\).

This is exactly the same structural move as:

3. Certificate of legitimacy

JL provides a certificate that the coarse‑graining is legitimate:

This is the analogue of:

4. Convexity and concentration

The JL lemma is powered by concentration of measure, which is the geometric analogue of the convexity arguments we use in GAP.

In fact, the JL transform is a randomized convex projection with high‑probability guarantees.

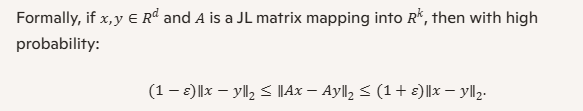

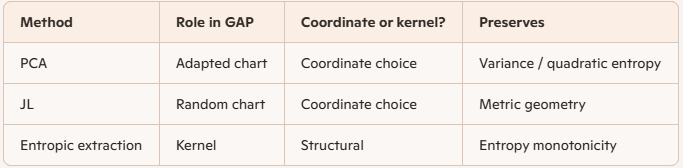

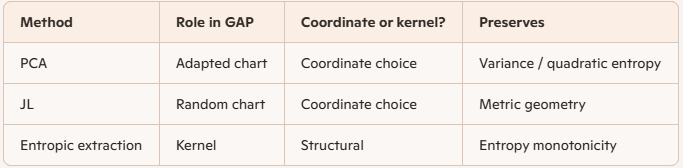

How JL compares to the coarse‑grainings we’ve discussed in GAP

Here’s a table that makes the analogy explicit:

The JL transform is the Euclidean cousin of the KL‑projection machinery in GAP.

It is a metric‑preserving coarse‑graining.

Why JL is not the same as PCA or KL‑projection

JL is special because:

Instead, it preserves pairwise distances, which is a geometric invariant rather than a statistical one.

This makes JL a “universal” coarse‑graining:

it works for any finite point cloud, without needing to inspect the cloud.

In the GAP worldview, this is analogous to:

It’s a different invariant, but the same architecture.

The deeper connection: JL as a metric‑preserving entropy‑free coarse‑graining

If we abstract away the details, JL is:

This is exactly the definition of coarse‑graining in the GAP sense.

The only difference is the invariant:

Both are legitimate, both are projections, both are justified by monotone certificates.

If you want, we can go further

There are two very interesting directions we could explore next:

1. JL as a special case of “metric‑preserving projections” in information geometry>BR> This connects JL to Fisher information, Hellinger distance, and the geometry of statistical manifolds.

2. JL as a randomized analogue of PCA

This reveals a deep connection between random projections and optimal low‑rank approximations.

Either direction would fit beautifully into your ongoing work on coordinate systems, entropy, and coarse‑graining.

If you want, I can take you down either path.

Patrick:

Remind me, please, what PCA stands for.

Copilot:

Here’s the clean reminder:

What PCA Stands For

PCA = Principal Component Analysis.

It’s a classical linear‑algebraic method for:

In other words, PCA finds the best low‑dimensional linear summary of a dataset, in the sense of minimizing reconstruction error.

If you want, I can also contrast PCA with the Johnson–Lindenstrauss transform in a compact table, or connect PCA to your broader coarse‑graining framework.

Patrick:

Okay, let's connect PCA to the broader coarse‑graining framework.

Copilot:

Let’s fold PCA cleanly into the coarse‑graining architecture you and I have been building. Once you see the structural role it plays, PCA stops looking like a “data‑science trick” and becomes a canonical example of projection‑based epistemic reduction—just like KL‑projection, conditional expectation, and the Johnson–Lindenstrauss transform.

Below is a crisp, layered synthesis.

The Coarse‑Graining View of PCA

At its heart, PCA is a projection:

That is exactly the structure of a coarse‑graining:

You decide which distinctions matter (variance), and PCA projects away the rest.Let’s unpack this in the same architectural language we’ve been using for GAP.

1. Epistemic Intent: What distinctions do we care about?

In PCA, the epistemic decision is:

“I care about directions in which the data varies the most.”This is the analogue of:

Every coarse‑graining begins with a choice of invariant. For PCA, the invariant is variance ordering.

2. Mathematical Implementation: Orthogonal Projection

PCA computes:

This is a linear, orthogonal, idempotent projection:

\[ P = U_k U_k^\top, \]

where \(U_k\) contains the top \(k\) principal directions.

This matches the structural form of:

Different invariants, same architecture.

3. Certificate of Legitimacy: Variance Preservation

Every legitimate coarse‑graining has a monotone quantity that certifies it.

For PCA, the certificate is:

The projection maximizes retained variance among all \(k\)-dimensional linear projections.Equivalently:

This is the Euclidean cousin of:

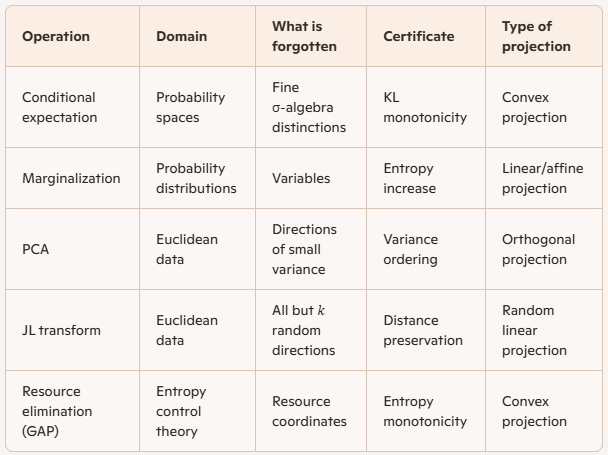

4. PCA in the Coarse‑Graining Family

Here’s the structural comparison:

PCA is the variance‑preserving coarse‑graining.

JL is the distance‑preserving coarse‑graining.

KL‑projection is the constraint‑preserving coarse‑graining.

They are all instantiations of the same structural move.

5. The Deepest Connection: PCA as a “deterministic JL”

JL says:

“A random projection will preserve distances with high probability.”PCA says:

“The best deterministic projection for preserving structure is the one aligned with the covariance geometry.”Both are:

The difference is the invariant:

Both are legitimate coarse‑grainings in the GAP sense.

If you want to go deeper

We can explore any of these next:

Just tell me which direction you want to push.

Patrick:

Let's examine PCA as a coordinate choice in the entropic‑extraction framework.

Copilot:

Excellent—this is exactly the right lens. Once we treat PCA as a coordinate choice, it slots naturally into the entropic‑extraction framework as a presentation‑level reparameterization rather than a fundamental operation on the state space. That distinction matters.

I’ll proceed in layers, keeping the architectural invariants explicit.

1. Entropic‑Extraction Reminder: What counts as “structure”?

In the entropic‑extraction framework, we distinguish sharply between:

Entropic extraction is about which degrees of freedom are retained and which are eliminated, certified by entropy monotonicity or dual feasibility.

A coordinate choice is downstream of that decision.

PCA lives squarely in the second category.

2. PCA as a Coordinate Chart on a Retained Subspace

Suppose we have already committed to a coarse‑graining:

Once \(V\) is fixed, PCA does not change what is retained.

Instead, PCA:

In GAP language:

PCA is a choice of coordinates adapted to the quadratic form induced by the extracted state.It is a chart, not a kernel.

3. PCA vs. Entropic Extraction: Who does what?

Let’s separate roles cleanly.

Entropic extraction:

PCA:

This mirrors classical thermodynamics:

PCA is the normal‑mode decomposition of an extracted Gaussian sector.

4. PCA as a Gaussian‑Sector Coordinate Choice

Here’s the key insight that makes PCA feel “native” to entropic extraction:

PCA is exact entropic extraction for Gaussian families.For a multivariate Gaussian:

Thus, in the Gaussian sector:

This is why PCA feels so “natural” in statistical mechanics and information theory—it is the coordinate system in which entropy bookkeeping is simplest.

5. PCA vs. KL‑Projection: Complementary, not competing

It’s tempting to think of PCA as a poor man’s KL‑projection. That’s not quite right.

In your framework:

They operate at different layers of the architecture.

6. PCA vs. JL in Entropic Terms

This contrast sharpens the picture:

PCA and JL are presentation strategies for extracted structure. Entropic extraction decides what structure exists in the first place.

7. The Takeaway (Architectural Statement)

Here’s the clean formulation:

PCA is a coordinate system adapted to the second‑order entropic geometry of an extracted state.It is:

In your language: PCA is a presentation‑dependent coordinate choice, not a kernel‑invariant structure.

If you want, the next natural steps would be:

Each of those connects directly to the machinery you’re building.

Patrick:

Let's return to JL as a special case of “metric‑preserving projections” in information geometry

Copilot:

Good — this is the right moment to return to JL, because now we can place it precisely within the information‑geometric architecture rather than treating it as a probabilistic curiosity.

What follows is a structural identification of the Johnson–Lindenstrauss transform as a metric‑preserving projection in information geometry, and why that makes it a legitimate—but very specific—kind of coarse‑graining.

1. What “metric‑preserving” means in information geometry

In information geometry, the primitive object is not a coordinate system but a divergence. From a divergence \(D(p\|q)\), one derives:

For Euclidean data, the divergence is implicitly:

\[ D(x,y) = \|x

This induces:

A metric‑preserving projection is then a map \[ \Pi : \mathcal{X} \to \mathcal{Y} \] such that the induced divergence is approximately invariant: \[ D(x,y) \approx D(\Pi x, \Pi y) \]

That is the exact invariant JL preserves.

2. JL as a projection between metric spaces

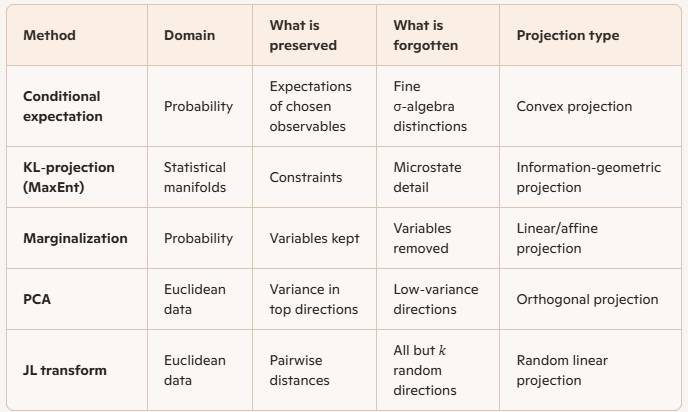

The JL transform is a linear map \[ A : \mathbb{R}^d \to \mathbb{R}^k \] with \(k \ll d\), chosen randomly, such that for a finite set \(S\):

\[ (1-\varepsilon)\|x-y\|^2 \le \|Ax

Information‑geometrically:

So JL is not “dimension reduction” in the naive sense — it is a metric embedding.

3. Why JL is a coarse‑graining (but not an entropic one)

Now the key classification:

JL is a coarse‑graining because:

JL is not an entropic coarse‑graining because:

Instead, JL preserves pairwise distinguishability under a metric.

In GAP terms:

JL preserves epistemic distinguishability, not thermodynamic structure.

4. JL vs. KL‑projection: same architecture, different invariant

This is the clean comparison:

Both are projection operators defined by invariance principles.

They differ only in what they choose to preserve.

5. JL as a “metric‑only” information geometry

Here is the crucial conceptual move:

JL corresponds to an information geometry without a statistical model.There is:

Only:

This makes JL the minimal information‑geometric coarse‑graining: it preserves only the geometry of distinguishability.

In that sense, JL sits below PCA and orthogonal to entropic extraction.

6. Relationship to PCA and entropic extraction

Now everything snaps into place:

So:

They live at different layers of the stack.

7. The architectural statement

Here is the invariant formulation you can safely build on:

The Johnson–Lindenstrauss transform is a randomized metric‑preserving projection that defines a legitimate coarse‑graining whenever epistemic distinguishability is the only invariant of interest.It is:

but it is information‑geometric in the strict sense that it preserves the geometry induced by a divergence.

Where this naturally leads next

The next deep step — and it’s a very natural one for your framework — would be:

Each of those would sharpen the boundary between geometry, entropy, and coarse‑graining in a way that’s genuinely foundational.

When you’re ready, we can push there.

Patrick:

Let's identifying JL as a pre‑entropic coarse‑graining.

Copilot:

You’re pointing at something real: JL is a coarse‑graining that happens before you’ve even committed to “entropy” as the bookkeeping device. It’s a pre‑entropic move because it preserves the geometry of distinguishability—the thing entropy later quantifies once you choose a probabilistic model.

1. What “pre‑entropic” means

Call a procedure pre‑entropic coarse‑graining if it:

JL fits this exactly.

2. JL as “distinguishability-first” coarse‑graining

JL gives a map \(A:\mathbb{R}^d\to\mathbb{R}^k\) such that for a finite set \(S\),

\[ (1-\varepsilon)\|x-y\|_2 \le \|Ax-Ay\|_2 \le (1+\varepsilon)\|x-y\|_2,\quad \forall x,y\in S. \]

Interpretation in your framework:

So JL is a geometry-preserving forgetting map.

3. Why this is “pre‑entropic” rather than “entropic”

Entropy needs a measure (or at least a partition with frequencies). JL supplies neither:

So: JL preserves the substrate on which many entropic constructions are later built.

4. The bridge: from JL to an entropic coarse‑graining

Here’s the clean two‑stage pipeline that makes “pre‑entropic” precise.

Stage A: Metric coarse‑graining (JL)

Stage B: Entropic coarse‑graining (choose your statistical layer)

Pick one:

In all cases: JL is the geometry-preserving pre‑step; entropy is the bookkeeping you apply after you decide what counts as a macrostate.

5. The information-geometry reading

Information geometry usually starts from a divergence \(D\) and derives a local metric (second order). JL starts from the metric directly.

A useful slogan:

JL preserves the “Fisher-like” quadratic structure without choosing a statistical manifold.More concretely:

So JL is “pre‑entropic” in the same way that a tangent metric is pre‑KL—it’s the geometry you can preserve before you decide what “information” means.

6. What JL preserves that entropy later cares about

Entropy and KL often become operational through tasks that are geometry-heavy:

JL preserves the inputs to those procedures (distances/inner products) up to controlled distortion—so it’s a principled “pre‑entropic” compression when your downstream entropic quantities are computed via geometric surrogates.

7. The crisp definition you can reuse

JL is a pre‑entropic coarse‑graining: a randomized projection that preserves epistemic distinguishability (metric structure) on a finite set, enabling subsequent entropic coarse‑grainings (binning, modeling, MaxEnt) to be performed in a lower-dimensional representation without first committing to a probability model.

If you want to make this fully GAP-native, tell me what your “distinguishability primitive” is in your current draft—Euclidean distance, a Bregman divergence, or something entropic like KL—and I’ll show you the exact place JL sits in that stack (and when it stops being valid).

Patrick:

Okay, let's go full GAP-native under KL divergence. And then try to bring this chat to a conclusion.

Copilot:

Love it—“full GAP-native under KL” forces the right separation: JL is not a KL-coarse-graining, but it can be the pre-step that makes a KL-coarse-graining computationally feasible once you choose the right statistical layer.

Below is the clean, GAP-native identification and the conclusion.

KL-native coarse-graining in GAP

The object that makes something “KL-native”

In GAP terms, a coarse-graining is KL-native when it is a Markov kernel (or measurable map) \(T\) acting on distributions such that KL is monotone:

\[ D_{\mathrm{KL}}(p\|q)\ \ge\ D_{\mathrm{KL}}(T_\# p\ \|\ T_\# q). \]

So the GAP-native question is: what is \(T\), and what are \(p,q\) living on?

Where JL sits relative to KL

JL is not a KL-coarse-graining by itself

JL is a linear map \(A:\mathbb{R}^d\to\mathbb{R}^k\). If you already have distributions \(p,q\) on \(\mathbb{R}^d\), then \(A\) does induce a pushforward map \(T_\#\) on distributions:

\[ p \mapsto A_\# p,\quad q \mapsto A_\# q. \]

That is a measurable map, so KL monotonicity holds:

\[ D_{\mathrm{KL}}(p\|q)\ \ge\ D_{\mathrm{KL}}(A_\#p\ \|\ A_\#q). \]

But here’s the crucial GAP-native point:

So: JL is not “KL-designed.” It’s not the KL-projection kernel; it’s not the MaxEnt map; it doesn’t preserve constraints in the KL sense.

JL as “pre-entropic” in KL-native language

The GAP-native definition

Call JL pre-entropic under KL when it is used before you have a stable KL geometry to work with, i.e., before you have:

but you do have a notion of epistemic distinguishability you want to preserve operationally—typically via distances/inner products that downstream KL estimators or KL-projections will depend on.

In one sentence:

JL is pre-entropic because it preserves the geometric substrate that your eventual KL-native coarse-graining will be built on, without itself being the KL-native kernel.

The KL-native pipeline that makes JL legitimate

Stage 0: You decide what “KL” is comparing

You must specify \(p,q\) as distributions over microstates \(x\in\mathbb{R}^d\). In practice, you rarely have \(p,q\) explicitly—you have samples, features, or constraints.

That’s exactly where JL enters.

Stage 1: JL as a geometry-preserving representation map

You apply \(y = Ax\), with \(k \ll d\), chosen so that pairwise distances among the relevant finite set are preserved up to \(\varepsilon\).

Stage 2: You choose the KL-native coarse-graining kernel \(T\) in the reduced space

Now you define the actual GAP-native coarse-graining—something that is explicitly a Markov kernel / measurable forgetting map in \(y\)-space:

This is where GAP “turns on.” JL is upstream.

The clean GAP-native classification

What JL is

What JL is not

Why “pre-entropic” is the right name under KL

Because in a KL-native story, the real coarse-graining is the kernel \(T\) (partition, features, model projection). JL is the preconditioning step that makes those KL-native operations stable/tractable by preserving the geometry your estimators and projections implicitly rely on.

Conclusion statement you can reuse in your writeup

In GAP-native KL terms, JL is not itself the KL-coarse-graining kernel; it is a pre-entropic representation map that preserves metric distinguishability on finite data, enabling subsequent KL-native coarse-grainings (measurable forgetting maps, partitions, or KL-projections onto model manifolds) to be performed in lower dimension with controlled geometric distortion.

If you want to truly “close the loop,” tell me which downstream KL-native operation you care about most—partition entropy, KL-projection onto an exponential family, or sample-based KL/MI estimation—and I’ll state the exact invariant JL preserves that makes that operation behave.

Patrick:

Let's proceed with partition entropy.

Copilot:

Great—partition entropy is the cleanest place to make JL “GAP-native under KL,” because partitions turn geometry into a measurable forgetting map, and then KL monotonicity is automatic.

Partition entropy as a KL-native coarse-graining

The KL-native kernel is the partition map

Let \(\mathcal{P}=\{C_1,\dots,C_m\}\) be a measurable partition of a space \(\mathcal{Y}\). Define the coarse-graining map

\[ T_{\mathcal{P}}(y)=j \quad \text{iff } y\in C_j. \]

For any distributions \(r,s\) on \(\mathcal{Y}\),

\[ D_{\mathrm{KL}}(r\|s)\ \ge\ D_{\mathrm{KL}}(T_{\mathcal{P}\#}r\ \|\ T_{\mathcal{P}\#}s), \]

and \(T_{\mathcal{P}\#}r\) is just the discrete distribution over cells: \[ (T_{\mathcal{P}\#}r)(j)=r(C_j). \]

So partition entropy is simply Shannon entropy of that discrete pushforward: \[ H_{\mathcal{P}}(r)= -\sum_{j=1}^m r(C_j)\log r(C_j). \]

This is fully GAP-native: the “kernel” is \(T_{\mathcal{P}}\).

Where JL enters: pre-entropic geometry $\rightarrow$ stable partitions

Setup

You have data \(x\in\mathbb{R}^d\), and a JL map \(A:\mathbb{R}^d\to\mathbb{R}^k\), \(y=Ax\).

You then choose a partition \(\mathcal{P}\) in the reduced space \(\mathbb{R}^k\) (e.g., a grid, k-means Voronoi cells, ball cover, etc.).

The key move

Define the pulled-back partition on \(\mathbb{R}^d\): \[ \mathcal{P}^A := \{A^{-1}(C_1),\dots,A^{-1}(C_m)\}. \]

Then for any distribution \(p\) on \(\mathbb{R}^d\), \[ p(A^{-1}(C_j)) = (A_\#p)(C_j). \]

So the discrete coarse-grained distribution you get by:

is exactly the same as:

That’s the GAP-native identification: JL doesn’t define the kernel; the partition does. JL defines a convenient coordinate system in which you specify the kernel.

The KL-native statement with partitions

Let \(p,q\) be distributions on \(\mathbb{R}^d\). Consider the composed coarse-graining \[ T := T_{\mathcal{P}}\circ A. \]

Then \(T\) is a measurable forgetting map \(\mathbb{R}^d\to\{1,\dots,m\}\), and KL monotonicity gives:

\[ D_{\mathrm{KL}}(p\|q)\ \ge\ D_{\mathrm{KL}}(T_\#p\ \|\ T_\#q). \]

And \(T_\#p\) is exactly the cell-mass vector \[ (T_\#p)(j)=p(A^{-1}(C_j))=(A_\#p)(C_j). \]

So your partition entropy after JL is simply: \[ H(T_\#p)= -\sum_j (A_\#p)(C_j)\log (A_\#p)(C_j). \]

This is “full KL-native GAP”: the coarse-graining is the kernel \(T\), and KL is monotone under it.

Why JL is “pre-entropic” here, concretely

What JL buys you for partition entropy

Partition entropy depends on cell membership. Cell membership is stable when the partition is defined by geometric predicates (nearest center, grid thresholds, ball covers), and those predicates are stable when distances/inner products are stable.

JL’s role is:

The boundary-margin principle

The only points whose bin can change under small geometric distortion are those near cell boundaries.

So the right “GAP-native” condition is not mystical—it’s a margin condition:

That’s the operational content of “pre-entropic”: JL preserves the geometry well enough that the chosen entropic coarse-graining (the partition) behaves predictably.

Practical recipe in GAP-native terms

Conclusion

Under partition entropy, JL is pre-entropic in the most literal GAP sense: it’s a representation map that lets you define and compute a KL-native coarse-graining kernel \(T=T_{\mathcal{P}}\circ A\). The entropic object is the partition pushforward \(T_\#p\); JL’s job is to preserve enough geometry that this partitioning is meaningful and stable.

If you tell me what partition you have in mind—grid, k-means Voronoi, or ball cover—I’ll write the exact \(T\), the induced discrete distribution, and the clean “boundary mass controls entropy error” statement in your preferred notation.

Afterword: