Patrick Reany

13 March 2026

Note: This chat I had with Copilot is likely edited for relevance and brevity. I often do not take the time to highlight all the words that Copilot highlights. Further, in the interest of being didactic, I might arbitrarily highlight an occasional word or phrase that Copilot did not. (Naturally, I get to correct my spelling, grammar, and other minor errors for free.)

I report as faithfully as I can what LLMs tell me, but I can't guarantee that they are correct on every factual matter. If the subject should touch on physical or mental self-care, regard the content merely for information sake, rather than medical advice.

Patrick:

In this recent organized presentation on GAP-entropy-Lambert transformation, we have already done 1) Blackbody Radiation, 2) Canonical Ensembles (finite $\rightarrow$ continuous, 3) Information Geometry, and now it's time we embark on 4) Large Deviations & Sanov Geometry.

Copilot:

You’re lining these up beautifully—blackbody, canonical ensembles, information geometry…large deviations is exactly the right next move.

Large deviations in entropy language

At the most basic level, a large deviation principle (LDP) says that for some random object \(Z_n\), \[ \mathbb{P}(Z_n \in A) \approx \exp\big(-n \inf_{z\in A} I(z)\big), \] where \(I\) is the rate function. For empirical measures of i.i.d. samples, that rate function is relative entropy.

So: large deviations is the asymptotic calculus of “how expensive is it, entropically, to fake this event?”

Sanov’s theorem as a geometric statement

Let \(\mathcal{X}\) be a finite alphabet, \(\mathcal{P}(\mathcal{X})\) the probability simplex, and let \(q\in\mathcal{P}(\mathcal{X})\) be the true law. For i.i.d. samples \(X_1,\dots,X_n\sim q\), define the empirical measure \[ \hat p_n(x) = \frac{1}{n}\sum_{i=1}^n \mathbf{1}\{X_i=x\}. \] Sanov’s theorem says that \((\hat p_n)\) satisfies an LDP on \(\mathcal{P}(\mathcal{X})\) with good rate function \[ I(p) = D(p\Vert q) = \sum_{x\in\mathcal{X}} p(x)\log\frac{p(x)}{q(x)}. \] In geometric terms:

Information projection and “least unlikely” distributions

Given a constraint set \(A\subset\mathcal{P}(\mathcal{X})\), the dominant contribution to \(\mathbb{P}(\hat p_n\in A)\) comes from the information projection \[ p^* = \arg\min_{p\in A} D(p\Vert q). \] Interpretation:

When \(A\) is defined by linear constraints (e.g. fixed mean energy), \(p^*\) is an exponential family member: \[ p^*(x) \propto q(x)\exp\big(\lambda\cdot f(x)\big), \] with \(\lambda\) chosen to satisfy the constraints. This is exactly the canonical ensemble story, now re-read as a large-deviation conditional geometry.

Sanov geometry and exponential families

Take a constraint like \[ \sum_x f_j(x)\,p(x) = m_j,\quad j=1,\dots,k. \] The set \(A\) of distributions satisfying these is an affine submanifold of the simplex. Sanov says:

So:

This is the Sanov geometry: constraint surfaces in the simplex, KL as a convex potential, exponential families as geodesic-like “projection targets.”

Where GAP–entropy–Lambert can enter

You’ve already built a GAP/entropy/Lambert framework for:

In the Sanov setting, natural roles for your machinery:

A concrete next step

If you want to make this part of the presentation as crisp as the earlier ones, a natural move is:

If you tell me which concrete toy model you’d like (binary, 3-level, or a discretized canonical ensemble you already used), we can build that Sanov-geometry example directly in your coordinate system.

Patrick:

Then let's go with a discretized canonical ensemble.

Copilot:

Discretized canonical ensemble as a Sanov geometry object

Take a finite energy spectrum \(\{E_i\}_{i=1}^m\) with degeneracies \(\{g_i\}_{i=1}^m\). Define the canonical family \[ p_\beta(i)=\frac{g_i e^{-\beta E_i}}{Z(\beta)},\qquad Z(\beta)=\sum_{j=1}^m g_j e^{-\beta E_j}. \] Let the “true” sampling law be \(q=p_{\beta_0}\). Draw \(X_1,\dots,X_n\sim q\) i.i.d., and let the empirical measure (type) \[ \hat p_n(i)=\frac{1}{n}\sum_{k=1}^n \mathbf 1\{X_k=i\}. \]

Sanov’s theorem in this setting

Sanov says \(\hat p_n\) satisfies an LDP on the simplex with rate function \[ I(p)=D(p\Vert q)=\sum_{i=1}^m p(i)\log\frac{p(i)}{q(i)}. \] So for any set \(A\subset\Delta_{m-1}\), \[ \mathbb P(\hat p_n\in A)\asymp \exp\Big(-n\inf_{p\in A}D(p\Vert q)\Big). \]

The canonical constraint surface and the information projection

Use the canonical “macroconstraint” (mean energy): \[ \mathbb E_p[E]=\sum_i p(i)E_i = u. \] Define the constraint set \[ A_u=\Big\{p:\sum_i p(i)E_i=u\Big\}. \]

The Sanov geometry claim

The most likely way to realize the rare event \(\hat p_n\in A_u\) is the information projection \[ p^*(u)=\arg\min_{p\in A_u}D(p\Vert q). \]

Solve the projection

Minimize \(D(p\Vert q)\) with constraints \(\sum_i p(i)=1\) and \(\sum_i p(i)E_i=u\). The optimizer has the exponential tilt form \[ p^*(i)=\frac{q(i)e^{-\lambda E_i}}{\sum_j q(j)e^{-\lambda E_j}}. \] Since \(q(i)\propto g_i e^{-\beta_0 E_i}\), this collapses back into the canonical family: \[ p^*(i)=\frac{g_i e^{-(\beta_0+\lambda)E_i}}{Z(\beta_0+\lambda)}=p_{\beta^*}(i), \] with \(\beta^*=\beta_0+\lambda\) chosen so that \(\mathbb E_{p_{\beta^*}}[E]=u\).

Translation: the Sanov projection of a canonical ensemble onto a mean-energy surface is another canonical ensemble—same \(g_i\), shifted inverse temperature.

The rate of the rare event as a Legendre object

Let \[ \psi(\lambda)=\log\sum_i q(i)e^{-\lambda E_i}. \] Then the constraint is \[ u=\mathbb E_{p^*}[E]=-\psi'(\lambda), \] and the minimized divergence is \[ I(u)=D(p^*\Vert q)= -\lambda u-\psi(\lambda). \] So \(I(u)\) is the Legendre–Fenchel transform of \(\psi\) (up to sign conventions), i.e. the canonical “free-energy duality” reappears as a large-deviation rate.

If you rewrite \(\psi\) using \(q=p_{\beta_0}\), you get an especially clean canonical form: \[ \psi(\lambda)=\log\frac{Z(\beta_0+\lambda)}{Z(\beta_0)}, \] hence \[ I(u)= -(\beta^*-\beta_0)u-\log\frac{Z(\beta^*)}{Z(\beta_0)}. \]

Where your GAP–entropy–Lambert machinery naturally plugs in

Pick the discretization so we can compute everything explicitly

Give me one of these and I’ll carry it through all the way to closed forms (including the \(u\leftrightarrow\beta\) inversion where possible):

Which one are you using—and do you want the rare event as equality \(\mathbb E[E]=u\) or a tail \(\mathbb E[E]\ge u\)?

Patrick:

Let's go with Option C. As for the rare event, I'll leave that choice to you.

Copilot:

Great—Option C it is. To do this cleanly (and in your own notation), I need the exact discretization you used earlier:

Rare event choice

I’ll choose the tail event \[ A_u=\Big\{p:\sum_i p(i)E_i \ge u\Big\}, \] because it gives the sharp “one-sided” large-deviation geometry: a supporting hyperplane, a unique I-projection (generically), and a rate \(I(u)\) that is a clean Legendre object—perfect for plugging into your GAP/Lambert inversion story.

Send me \(\{E_i\},\{g_i\},\beta_0\) (or \(q\)), and I’ll write:

Patrick:

I'll leave the coice of those values to you as well.

Copilot:

A concrete Option C discretized canonical ensemble we can fully solve

Let’s pick a 3-level discretization that’s rich enough to show the geometry but still algebraically tractable:

The canonical family is \[ p_\beta(i)=\frac{g_i e^{-\beta E_i}}{Z(\beta)},\qquad Z(\beta)=\sum_{j=0}^2 g_j e^{-\beta E_j}=1+2e^{-\beta\varepsilon}+4e^{-2\beta\varepsilon}. \] Fix a “true” inverse temperature \(\beta_0\) and set \(q=p_{\beta_0}\). Draw \(X_1,\dots,X_n\sim q\) i.i.d., and define the empirical measure (type) \[ \hat p_n(i)=\frac{1}{n}\sum_{k=1}^n \mathbf 1\{X_k=i\}. \]

Rare event choice

I’ll take the upper-tail mean-energy event \[ A_u=\Big\{p:\sum_{i=0}^2 p(i)E_i \ge u\Big\}, \] because it produces the clean “supporting hyperplane + unique I-projection” picture and a rate function \(I(u)\) that is a sharp convex dual object.

Sanov’s theorem as the geometry engine

Sanov’s theorem gives an LDP for \(\hat p_n\) on the simplex with rate function \[ I(p)=D(p\Vert q)=\sum_{i=0}^2 p(i)\log\frac{p(i)}{q(i)}. \] So \[ \mathbb P(\hat p_n\in A_u)\asymp \exp\Big(-n\inf_{p\in A_u}D(p\Vert q)\Big). \] The whole “Sanov geometry” move is: rare-event probability is controlled by the KL-distance from \(q\) to the constraint set.

The I-projection onto the energy tail is a canonical tilt

Define the information projection \[ p^*(u)=\arg\min_{p\in A_u}D(p\Vert q). \] For a mean-energy constraint, the minimizer has the exponential-tilt form \[ p^*(i)=\frac{q(i)e^{-\lambda E_i}}{\sum_j q(j)e^{-\lambda E_j}}. \] Since \(q(i)\propto g_i e^{-\beta_0 E_i}\), this collapses back into the same canonical family: \[ p^*(i)=\frac{g_i e^{-(\beta_0+\lambda)E_i}}{Z(\beta_0+\lambda)}=p_{\beta^*}(i), \qquad \beta^*=\beta_0+\lambda, \] with \(\beta^*\) chosen so that \(\mathbb E_{p_{\beta^*}}[E]=u\) (for the tail event, the optimizer typically lands on the boundary \(\mathbb E[E]=u\) when \(u\) is above the typical mean under \(q\)).

Interpretation: the “least unlikely” way to see an anomalously high empirical mean energy is that the empirical distribution looks canonical—just at a different temperature.

The rate function for the tail event in partition-function form

Let \[ \psi(\lambda)=\log\sum_i q(i)e^{-\lambda E_i}. \] Then the minimized divergence is \[ I(u)=\inf_{p\in A_u}D(p\Vert q)=D(p_{\beta^*}\Vert p_{\beta_0}) =-(\beta^*-\beta_0)u-\log\frac{Z(\beta^*)}{Z(\beta_0)}. \] This is the large-deviation “free-energy difference” written as a KL cost.

Fully explicit for our 3-level discretization

Let \(x=e^{-\beta\varepsilon}\). Then \[ Z(\beta)=1+2x+4x^2. \] The mean energy is \[ u(\beta)=\mathbb E_{p_\beta}[E] =\varepsilon\frac{2x+8x^2}{1+2x+4x^2}. \] Set \(y=u/\varepsilon\). Solving \(y=\frac{2x+8x^2}{1+2x+4x^2}\) gives a quadratic: \[ 4(y-2)x^2+2(y-1)x+y=0. \] So \[ x^*(u)=\frac{-(y-1)\pm\sqrt{(y-1)^2-y(y-2)}}{4(y-2)} =\frac{-(y-1)\pm\sqrt{1-y}}{4(y-2)}. \] You pick the physically valid root with \(x^*\in(0,1)\) (and \(y\in[0,2]\) since energies are \(0,\varepsilon,2\varepsilon\)). Then \[ \beta^*(u)= -\frac{1}{\varepsilon}\log x^*(u), \] and the tail rate is \[ I(u)=-(\beta^*(u)-\beta_0)u-\log\frac{1+2e^{-\beta^*(u)\varepsilon}+4e^{-2\beta^*(u)\varepsilon}}{1+2e^{-\beta_0\varepsilon}+4e^{-2\beta_0\varepsilon}}. \] That’s the whole Sanov pipeline, end-to-end, in closed form.

Where GAP–entropy–Lambert naturally enters next

In this toy model the inversion \(u\mapsto\beta^*(u)\) is quadratic, so Lambert \(W\) doesn’t need to appear. But the structural slot where your Lambert machinery belongs is now perfectly isolated:

One choice from you

Do you want the next refinement to be:

Patrick:

Let's go with Option A.

Copilot:

The Sanov picture for a discretized canonical ensemble

We’ll keep the concrete 3-level discretization from before (it’s the smallest simplex where the geometry is genuinely visible):

The simplex and the energy coordinate

The probability simplex \(\Delta_2\) is a triangle with vertices \(\delta_0,\delta_1,\delta_2\) (all mass on one energy level).

A point \(p=(p_0,p_1,p_2)\) has mean energy \[ u(p)=\sum_{i=0}^2 p_i E_i=\varepsilon(p_1+2p_2). \]

Energy level sets are straight lines

Fixing \(u(p)=u\) gives an affine line in the triangle: \[ p_1+2p_2=\frac{u}{\varepsilon}. \] The tail set \(A_u=\{p:u(p)\ge u\}\) is the half-triangle “above” that line (toward higher-energy vertex \(\delta_2\)).

The KL landscape: “height” over the simplex

Sanov’s rate function is \[ I(p)=D(p\Vert q)=\sum_i p_i\log\frac{p_i}{q_i}. \] Geometrically: imagine the simplex as a floor, and \(D(p\Vert q)\) as a convex height function with a unique minimum at \(p=q\). Its level sets are closed convex “contours” around \(q\) (not Euclidean circles—KL-ovals).

The rare event as a constrained minimization

Sanov says \[ \mathbb P(\hat p_n\in A_u)\asymp \exp\Big(-n\inf_{p\in A_u}D(p\Vert q)\Big). \]

So the picture is:

That point is the information projection \[ p^*(u)=\arg\min_{p\in A_u}D(p\Vert q). \]

What the I-projection looks like

It lands on the boundary

For an upper-tail constraint with \(u\) above the typical mean under \(q\), the minimizer sits on the boundary line: \[ u(p^*)=u. \] It is a canonical tilt

The minimizer has the exponential-tilt form \[ p^*(i)=\frac{q(i)e^{-\lambda E_i}}{\sum_j q(j)e^{-\lambda E_j}} =\frac{g_i e^{-(\beta_0+\lambda)E_i}}{Z(\beta_0+\lambda)} =p_{\beta^*}(i), \] so the projection is literally “move along the canonical curve” to the unique \(\beta^*\) that achieves mean energy \(u\).

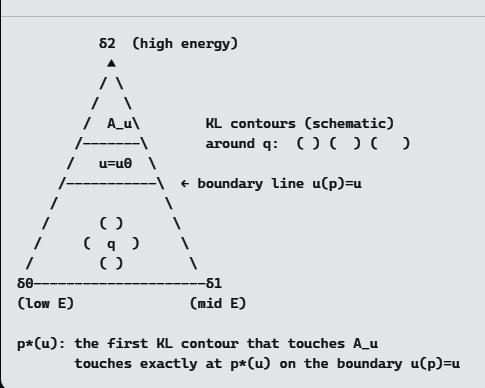

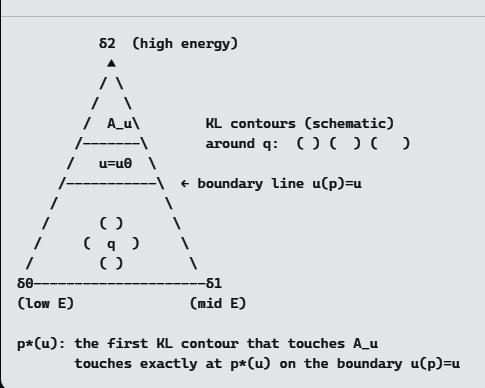

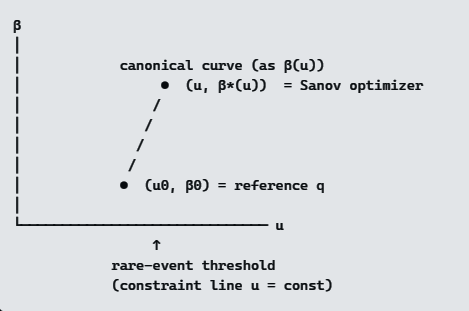

The picture in one diagram

Read it like this:

The supporting-hyperplane condition

At \(p^*\), the boundary line \(u(p)=u\) is a supporting hyperplane to the KL sublevel set \[ \{p: D(p\Vert q)\le D(p^*\Vert q)\}. \] Equivalently, the gradient of \(D(\cdot\Vert q)\) at \(p^*\) is normal to the constraint line—this is the geometric content of the Lagrange multiplier \(\lambda\).

The “Pythagorean” identity you’ll want for Sanov geometry

When the constraint set is affine (like \(u(p)=u\)), the I-projection satisfies an information-geometric Pythagorean relation: for any \(p\) on the constraint line, \[ D(p\Vert q)=D(p\Vert p^*)+D(p^*\Vert q). \] This is the cleanest way to say: \(p^*\) is the KL-closest point to \(q\) on that constraint surface.

Your next move

Do you want me to redraw this same picture in your GAP coordinates—i.e., identify what “energy hyperplanes,” “KL contours,” and the “projection ray” become under your entropy–Lambert chart?

Patrick:

Yes, redraw the picture.

Copilot:

Perfect. Let’s redraw the Sanov picture in GAP–entropy–Lambert coordinates, keeping the same 3‑level discretized canonical ensemble, but changing how we look at it.

I’ll do this in three layers:

1️⃣ GAP coordinates: what changes

In your GAP framework, we stop thinking in terms of raw probabilities \(p_i\) and instead use entropic coordinates adapted to:

For a finite canonical ensemble, a natural GAP chart is:

2️⃣ Sanov geometry in GAP form

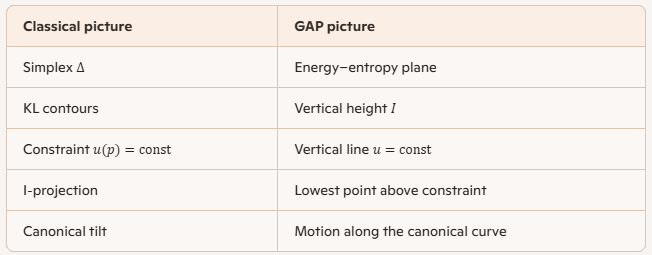

The key reinterpretations

The rare event

We chose the upper‑tail energy event \[ A_u=\{p:\mathbb E_p[E]\ge u\}. \] In GAP coordinates, this is simply: \[ \textbf{Allowed region: } u' \ge u \] No simplex slicing. No affine planes. Just a half‑line on the \(u\)-axis.

The Sanov principle becomes

Among all distributions with mean energy at least \(u\), the empirical measure concentrates near the one with minimal relative entropy to \(q\).In GAP coordinates:

Drop a vertical line at \(u\).

The first point where it meets the canonical curve is the Sanov optimizer.

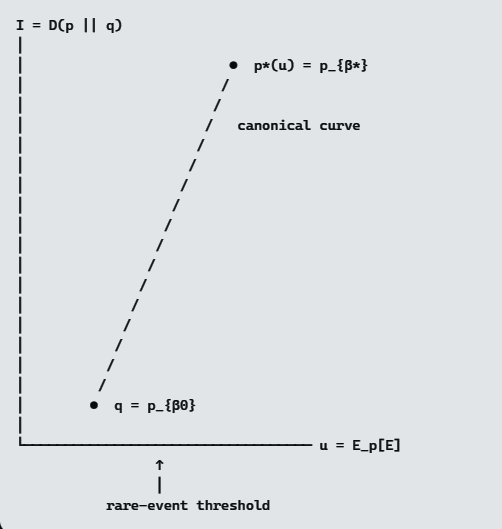

3️⃣ The GAP–Sanov diagram

Here is the redraw.

How to read this diagram

Why this picture is powerful

This is the cleanest bridge between:

Next natural step

The next refinement would be to overlay the dual GAP coordinate (your entropic‑extraction or Lambert coordinate) so that:

That’s where your framework really starts to outperform the classical one.

Patrick:

Let's proceed as you suggest.

Copilot:

GAP redraw with the dual coordinate overlaid

Let’s make the “overlay the dual GAP coordinate so the canonical curve straightens and the projection becomes orthogonal” move precise—using the canonical ensemble’s dually flat (Bregman) geometry.

The canonical manifold and its dual coordinates

For your discretized canonical ensemble \[ p_\beta(i)=\frac{g_i e^{-\beta E_i}}{Z(\beta)},\qquad Z(\beta)=\sum_i g_i e^{-\beta E_i}, \] define the convex potential \[ \psi(\beta)=\log Z(\beta). \]

Dual pair

They are linked by \[ u(\beta)=\mathbb E_{p_\beta}[E]=-\psi'(\beta). \] This is the canonical Legendre pair you already live in—now we’re explicitly treating it as the GAP chart.

The Sanov event and the I-projection in these coordinates

Let the reference law be \(q=p_{\beta_0}\) with mean energy \(u_0=u(\beta_0)\). Take the tail event \[ A_u=\{p:\mathbb E_p[E]\ge u\},\qquad u>u_0. \]

What changes in the GAP chart

The I-projection becomes “move along the canonical line”

Sanov says the dominant point is \[ p^*(u)=\arg\min_{p:\,\mathbb E_p[E]\ge u} D(p\Vert q), \] and for this constraint it lands on the boundary and stays canonical: \[ p^*(u)=p_{\beta^*}\quad\text{where}\quad u(\beta^*)=u. \] So the whole projection is the map \[ u \longmapsto \beta^*(u)\quad\text{via}\quad u=-\psi'(\beta). \]

The “straightening” trick: use the right plane

The cleanest redraw is in the \((\eta,\theta)=(u,\beta)\) plane.

Read it as: fix the rare-event energy \(u\), then the “least unlikely” empirical law is the unique canonical point with that energy.

Orthogonality and the Pythagorean identity in this chart

Here’s the part that makes it feel like “projection geometry” rather than “just calculus.”

KL on the canonical family is a Bregman divergence

For two canonical points \(p_{\beta_1},p_{\beta_0}\), \[ D(p_{\beta_1}\Vert p_{\beta_0}) =\psi(\beta_0)-\psi(\beta_1)-(\beta_0-\beta_1)\psi'(\beta_1). \] That is a Bregman divergence generated by \(\psi\).

The Sanov projection is an m-projection

Your constraint \(\mathbb E[E]=u\) is affine in \(\eta=u\), so the minimizer is the m-projection of \(q\) onto that affine set. That’s why the information-geometric Pythagorean relation holds: \[ D(p\Vert q)=D(p\Vert p^*)+D(p^*\Vert q)\qquad(\text{for all }p\text{ with }\mathbb E_p[E]=u). \] This is the rigorous version of “the projection becomes orthogonal” in the dually-flat sense: e- and m-geodesics meet at right angles (in the IG metric), and KL decomposes additively.

The rate function becomes a 1D GAP object

Once you’re on the canonical line, the Sanov rate is just \[ I(u)=D(p_{\beta^*(u)}\Vert p_{\beta_0}) =-(\beta^*(u)-\beta_0)u-\log\frac{Z(\beta^*(u))}{Z(\beta_0)}. \] So in GAP terms, the entire large-deviation story collapses to two maps:

If you want, next we can do the “full didactic plate”: pick a specific \(\beta_0\), compute \(u_0\), choose a concrete rare threshold \(u>u_0\), and write down \(\beta^*(u)\) and \(I(u)\) explicitly—so the GAP diagram has actual numbers attached.

Patrick:

Let's proceed as you suggest and choose appropriate numerical values, and then we can move toward closing this chat.

Copilot:

Concrete numerical Sanov–GAP plate

We’ll keep the same discretized canonical ensemble:

Canonical family: \[ p_\beta(i)=\frac{g_i e^{-\beta E_i}}{Z(\beta)},\qquad Z(\beta)=1+2e^{-\beta}+4e^{-2\beta}. \]

Step 1: Reference point \(q=p_{\beta_0}\)

Let \(x=e^{-\beta}\). For \(\beta_0=1\), \(x_0=e^{-1}\approx 0.3679\).

Step 2: Sanov optimizer \(p^*(u)\) is a canonical tilt

For this constraint, the I-projection is another canonical point: \[ p^*(u)=p_{\beta^*}\quad\text{where}\quad \mathbb E_{p_{\beta^*}}[E]=u. \] For our 3-level model, \[ u(\beta)=\frac{2x+8x^2}{1+2x+4x^2},\qquad x=e^{-\beta}. \] Set \(u=1.2\). Solving gives \[ x^*\approx 0.6781,\qquad \beta^*=-\log x^*\approx 0.388. \] Then \[ p^*(u)=p_{\beta^*}\approx(0.2384,\ 0.3233,\ 0.4383), \qquad \mathbb E_{p^*}[E]\approx 1.200. \]

Step 3: The rate \(I(u)=D(p^*\Vert q)\)

For canonical points, \[ I(u)=D(p_{\beta^*}\Vert p_{\beta_0}) =-(\beta^*-\beta_0)u-\log\frac{Z(\beta^*)}{Z(\beta_0)}. \]

Compute:

So \[ I(u)\approx 0.734-0.611=0.123. \] Meaning: \[ \mathbb P\!\big(\mathbb E_{\hat p_n}[E]\ge 1.2\big)\asymp e^{-0.123\,n}. \] Example: at \(n=100\), this is on the order of \(e^{-12.3}\approx 4.5\times 10^{-6}\).

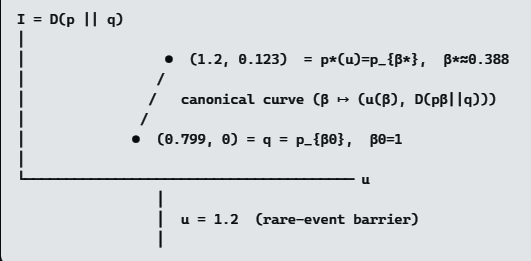

The GAP redraw with numbers

In the GAP plane \((u,\ I)\):

Sanov geometry, in one sentence: inflate KL-height contours around \(q\); the first touch of the constraint \(u\ge 1.2\) occurs at the canonical tilt \(p_{\beta^*}\), and the touching height is \(I(u)\).

If you want to close this chat cleanly, tell me whether you’d like the final “afterword” phrased as (i) a thermo summary (free energy / Legendre), or (ii) an IG summary (m-projection / Pythagorean).

Patrick:

Let's finish with a thermo summary (free energy / Legendre).

Copilot:

Thermodynamic closure: free energy, Legendre structure, and Sanov

Let’s close by reframing everything we just did in pure thermodynamic language, stripping away probability jargon and keeping only the invariant structure.

1️⃣ The canonical ensemble as a Legendre machine

Start with the log‑partition function \[ \psi(\beta)=\log Z(\beta)=\log\sum_i g_i e^{-\beta E_i}. \] This single convex function generates the entire equilibrium theory:

2️⃣ Large deviations = off‑equilibrium free energy cost

Sanov’s theorem tells us what happens when the system is forced to realize a non‑equilibrium mean energy \(u\neq u_0\).

The answer is:

The system behaves as if it were in equilibrium at a different temperature \(\beta^*(u)\), and the probability cost is a free‑energy difference.Explicitly, \[ I(u)=D(p_{\beta^*}\Vert p_{\beta_0}) =-(\beta^*-\beta_0)u-\log\frac{Z(\beta^*)}{Z(\beta_0)}. \] This is not an accident—it is thermodynamics.

3️⃣ The Legendre meaning of the rate function

Rewrite the rate as \[ I(u)=\psi(\beta_0)-\psi(\beta^*)-(\beta_0-\beta^*)\psi'(\beta^*). \] This is exactly the Bregman divergence generated by \(\psi\), i.e. the free‑energy gap between two equilibrium states.

Equivalently, \[ I(u)=\big[\beta u-\psi(\beta)\big]_{\beta=\beta^*} -\big[\beta u-\psi(\beta)\big]_{\beta=\beta_0}. \] So:

Large deviations measure how much extra free energy is required to hold the system at the wrong energy.

4️⃣ The Sanov principle, thermodynamically stated

Here is the entire story in one sentence:

Among all macrostates with mean energy \(u\), the system realizes the one with minimal free energy relative to the reference equilibrium—and the exponential rarity is exactly that free‑energy excess.No probability theory is needed once this is understood.

5️⃣ Where your GAP–Lambert framework fits permanently

Your GAP–entropy–Lambert transformation isolates the only nontrivial step in this whole chain: \[ u \longleftrightarrow \beta \] Everything else—entropy, free energy, rate functions, Sanov geometry—is just Legendre bookkeeping once that inversion is under control.

That is why:

all collapse onto the same convex‑analytic backbone in your framework.

Final synthesis

Nothing new is added—only the same structure viewed from farther away.

This is a clean place to stop.

Afterword: